Frontline Learning Research Vol. 8 No. 6 (2020) 59-76

ISSN 2295-3159

1 University of Hagen, Germany

2 University of Koblenz-Landau, Germany

Article received 20 April 2020 / revised 31 July / accepted 2 October / available online 4 November

Regulation of distance to the screen (i.e., head-to-screen distance and fluctuation of head-to-screen distance) has been shown to reflect the cognitive engagement of the reader. However, it is still unclear (a) whether regulation of distance to the screen can serve as a parameter for inferring high cognitive load and (b) whether it can predict upcoming answer accuracy. Configuring tablets or other learning devices so that the learning software can analyze distance to the screen is within close reach. The software might use the measure as a person-specific indicator of the need for extra scaffolding. To better gauge this potential, we analyzed eye-tracking data of children (N = 144, Mage = 13 years, SD = 3.2 years) engaging in multimedia learning, as distance to the screen is estimated as a by-product of eye tracking. Children were told to maintain a still seated posture while reading and answering questions at three difficulty levels (i.e., easy vs. medium vs. difficult). Results showed that task difficulty influences how well the distance to the screen is regulated, supporting the view that regulation of distance to the screen is a promising measure. Closer head-to-screen distance and larger fluctuation of head-to-screen distance can reflect that participants are engaging in a challenging task. Only larger fluctuation of head-to-screen distance predicted upcoming incorrect answers. The link between distance to the screen and the processing of a cognitive task can unobtrusively reveal the reader's cognitive states during system usage, which can support adaptive learning and testing.

Keywords: distance to the screen; multimedia reading; motor-cognition dual tasking; task difficulty; eye movement

Employing dual-task paradigms, previous research has provided evidence that posture control is associated with cognitive processing (Chen et al., 2018; Chong et al., 2010; Kerr et al., 1985; Lajoie et al., 1993; Langhanns & Müller, 2018; Patel & Bhatt, 2015; Stelmach et al., 1990; Woollacott & VanderVelde, 2008). According to the Multiple Resource Theory (Wickens, 2002), when the cognitive task is demanding, fewer attentional resources can be allocated to postural control, leading to degraded performance in postural control. Regulation of distance to the screen is one potential indicator of the quality of postural control (Balaban et al., 2004; Bonnet et al., 2017; Kaakinen et al., 2018; Qiu & Helbig, 2012). It is thus likely that distance to the screen can indicate high cognitive demand.

The links between postural control and cognitive processing motivated our interest in testing the potential association between distance measures and the state of cognitive processing. The current study used a still seated posture as the postural task, because even highly practiced postures and seemingly effortless actions such as sitting require some cognitive processing. The classical setting of studies on cognitive-postural interference was to ask participants to stand or walk on a balance platform and perform a cognitive task (e.g., Donath et al., 2015; Maylor & Wing, 1996; Pellecchia, 2003; Schaefer et al., 2015). Participants had to keep their balance within a range that prevented them from falling. High cognitive demand was reflected by a large discrepancy between the set point of the position (e.g., center of pressure) and the actual position. However, a recent study revealed that maintaining a seated posture while performing a cognitive task caused the largest decrements in the cognitive task, compared to relaxed lying and slight movement (Langhanns & Müller, 2018). In a study with children (Igarashi et al., 2016), maintaining the seated posture was degraded when accompanied by a concurrent demanding cognitive task. Hence, maintaining a seated posture was selected as the postural control task in this study, as it requires cognitive resources and sitting is one of the most common body positions during learning.

Research on postural stability (i.e., fluctuation between the set point and the current position) has yielded contradictory findings on how people regulate their posture during demanding cognitive processing. Some researchers reported significantly increased anteroposterior (front-to-back) sway with a concurrent difficult cognitive task (Kerr et al., 1985; see Woollacott & Shumway-Cook, 2002, for a review). This view is supported by a recent dual-task study (Gaschler et al., 2019), which suggests that high cognitive load can increase the variability of responses (i.e., where exactly the correct response panel is hit on a touch screen). Yet, other researchers reported decreased fluctuation of head-to-screen distance when people performed a visual search task demanding precision compared to a control visual task (Balaban et al., 2004; Bonnet et al., 2017; Kaakinen et al., 2018). These studies demonstrated a synergy between the control of postural and oculomotor behavior in precise visual search: reduced postural sway facilitates efficient control of eye movements (cf. Balaban et al., 2004; Kaakinen et al., 2018). It is thus worthwhile to investigate how the fluctuation of head-to-screen distance changes when people sit still and perform a cognitive task.

In front of the screen, one has to keep the head in a position that allows good vision, without leaning backward or forward. We hence define the regulation of distance to the screen as the changes or shifts of the set point, which is the distance from the midpoint between the eyes to the screen. Previous studies have shown that a closer head-to-screen distance (i.e., a change of the postural set point) can indicate high cognitive engagement or highly focused attention (Balaban et al., 2004; Bonnet et al., 2017; Kaakinen et al., 2018; Qiu & Helbig, 2012). The head-to-screen distance becomes smaller when text segments are task-relevant (Kaakinen et al., 2018) and when tasks are challenging (e.g., identifying planes in the Warship Commander Task in Balaban et al., 2004; a difficult search task, "Where is Wally", in Bonnet et al., 2017; a two-digit addition task in Qiu & Helbig, 2012). However, these studies did not directly examine whether regulation of distance to the screen is influenced by task difficulty, or whether it can predict future answer accuracy.

The current study used multimedia learning as the cognitive task for three reasons. First, multimedia learning involves the visuospatial sketchpad (cf. Baddeley, 1986), which has been shown to interfere with postural control (e.g., Chen et al., 2018; VanderVelde, Woollacott & Shumway-Cook, 2005). Multimedia reading refers to reading texts combined with pictures. According to several theories, namely Dual-Coding Theory (Paivio, 1986), the Cognitive Theory of Multimedia Learning (Mayer, 2009), and the Integrative Model of Text-Picture Comprehension (Schnotz & Bannert, 2003), the integrative processing of texts and pictures takes place in a verbal channel and a pictorial channel. The theories share the view that verbal processing requires constructing a propositional representation of the text by relating the objects and events described in the text to relevant world knowledge (i.e., situational representations, Kintsch & van Dijk, 1985; mental model, Johnson-Laird, 1983). Pictorial processing requires constructing mental representations through structure mapping based on analogies between the external and internal depictive representations (Gentner, 1989; Knauff & Johnson-Laird, 2002; Sims & Hegarty, 1997). During multimedia processing, verbal and pictorial processing interact continuously in mental model construction and mental model inspection (Schnotz & Wagner, 2018; Zhao et al., 2014; Zhao et al., 2020).

Dual-task studies have provided evidence that the comprehension of text-picture materials draws on more resources of the visuospatial sketchpad than the comprehension of text-only materials (Gyselinck et al., 2000; Kruley et al., 1994). The visuospatial sketchpad is potentially involved in the perceptual analysis of pictures and in the basic mapping between texts and pictures (cf. Gyselinck et al., 2002). Given that pictures convey meaning through visual traces (e.g., lines, blobs), the visuospatial sketchpad may be responsible for formatting and storing visual and spatial information (cf. Gyselinck et al., 2002). A further study provides evidence that the spatial representation, rather than the visual aspect, plays an essential role in constructing and inspecting mental models (Knauff & Johnson-Laird, 2002). As mentioned earlier, spatial cognitive tasks interfere significantly with postural regulation (e.g., VanderVelde, Woollacott & Shumway-Cook, 2005). Compared to non-spatial cognitive tasks, spatial tasks caused a significant decrease in postural control (Chen et al., 2018). To investigate cognitive-postural interaction, we therefore employed a multimedia reading task, which relies on visuospatial processing.

Our use of multimedia learning material contrasts with previous studies on cognitive-postural interference, which used cognitive tasks that come in small units, such as arithmetic calculation (Igarashi et al., 2016), backward counting (Schaefer et al., 2015), or memorizing words (Donath et al., 2015). We opted for a task demanding the extraction and integration of information units from different sources, as found in computerized tutorials inside and outside the classroom. We aimed to test whether the difficulty of more complex learning tasks involving reading and picture processing has an impact on postural stability.

Furthermore, presenting multimedia materials under different reading conditions allows testing whether the link between distance to the screen and cognitive processing generalizes. According to McCrudden and Schraw (2007), learners initially use a semantic coherence strategy as a default orientation for global understanding, and then switch to relevance-oriented processing to meet the task demand once they have constructed an initial mental model. For multimedia learning, Zhao, Schnotz, Wagner and Gaschler (2020) defined the initial phase of task-independent, general coherence-oriented processing as initial mental model construction. The later phase of selective processing (adapting the mental model to the question to be answered) is defined as adaptive mental model specification. There are sequential constraints between both processing modes, as initial mental model construction is required before adaptive mental model specification can occur.

In the current study, we analyzed distance to the screen when participants had the multimedia material and the question to be answered available. We differentiated two conditions that were identical with respect to the elements on the screen (question and multimedia material), but differed with respect to prior engagement with the material. In the question-after-material condition, participants had previously been granted the opportunity to process the material without yet knowing what the question would be. In this condition, participants should engage in initial mental model construction followed by adaptive mental model specification once the question is communicated. In the question-with-material condition, the question comes first and the material is displayed later. In this condition, the mental model should be constructed to specifically cover information required by the question. These different reading conditions are considered in the current study in order to generalize the findings on the association of distance measures and cognitive processing to different variants of engaging with multimedia materials.

The posture control indicator (i.e., distance to the screen) may be used to assess when learners are challenged by the material. In multimedia learning, learners can engage with the material for seconds to minutes. Rather than waiting until the final response reveals that scaffolding would have been needed, a system could use distance to the screen as an online measure of processing that triggers offers of support. Educational systems have used user-modeling techniques to track users' behavior and analyze learning progress in order to personalize human-system interaction (Scheiter et al., 2019). For instance, AdELE (Garcia-Barrios et al., 2004) used real-time eye tracking of fixations and saccades to establish a user-profiling database; the system can suggest adaptive information in appropriate media to match the user's behavior. In the current study, head-to-screen distance was tracked as a by-product of eye tracking. Yet such measures might also become available in interaction with everyday electronic media. Current devices (e.g., eye trackers, Nintendo Wii, laptops, tablets and smartphones) have cameras that can track the distance between users' faces and the screen (Kaltwasser et al., 2017; Roig-Maimó et al., 2018). As tracking the distance will be possible at low cost with standard hardware, we need to know whether this measure is useful for unobtrusively capturing challenges encountered by the learner. If there is a link between distance to the screen and the processing of a cognitive task, distance measures can help to unobtrusively track users' cognitive states during system usage, enabling the system to adapt to users' current cognitive load.
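To make the idea of an online trigger concrete, the following is a minimal Python sketch of how a learning system might flag rising fluctuation of head-to-screen distance while a learner works on an item. It is a hypothetical illustration, not part of the study: the class name `DistanceFluctuationMonitor`, the sliding-window size, and the threshold are assumptions chosen for illustration, whereas the study computed fluctuation across quintiles after each item had been answered.

```python
import statistics
from collections import deque


class DistanceFluctuationMonitor:
    """Hypothetical online monitor for head-to-screen distance.

    Keeps a sliding window of the most recent distance samples (in mm) and
    reports when their population standard deviation exceeds a threshold.
    The default window (300 samples, about 5 s at 60 Hz) and threshold
    (8 mm) are illustrative assumptions, not values from the study.
    """

    def __init__(self, window: int = 300, threshold_mm: float = 8.0):
        if window < 2:
            raise ValueError("window must hold at least two samples")
        self.samples = deque(maxlen=window)
        self.threshold_mm = threshold_mm

    def update(self, distance_mm: float) -> bool:
        """Add one sample; return True if the system might offer support."""
        self.samples.append(distance_mm)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough data in the window yet
        return statistics.pstdev(list(self.samples)) > self.threshold_mm


# Usage with a tiny window: alternating 600/620 mm has an SD of 10 mm.
monitor = DistanceFluctuationMonitor(window=4, threshold_mm=8.0)
flags = [monitor.update(d) for d in (600, 620, 600, 620)]
print(flags)  # [False, False, False, True]
```

In practice, the window and threshold would have to be calibrated empirically, possibly per person.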

This approach may be especially useful for children, as they are still developing their postural control system (Rival et al., 2005; Schmid et al., 2005, 2007). Increased head and body sway was observed in children compared to adults when they were asked to stand on a platform with eyes open or closed (Sakaguchi et al., 1994). Reading in particular seems to affect postural control: when children stood on a balance platform, reading aloud while standing still produced more body sway than counting backward (Blanchard et al., 2005). Under cognitive-postural dual tasking, children showed a larger decline than young adults in postural control (wide stance vs. tandem Romberg stance) while concurrently performing a difficult visual working memory task (Reilly et al., 2008). These studies revealed that the attentional demands of a concurrent cognitive task can affect postural control in children. As this work suggests that the detrimental effect of cognitive load on postural control is greater in children than in adults, we considered a sample of children well suited for this study.

Taken together, this study aimed to examine (a) whether regulation of distance to the screen (i.e., head-to-screen distance and fluctuation of head-to-screen distance) can help to unobtrusively infer whether a learner is currently engaging in a challenging task, and (b) whether regulation of distance to the screen can predict future answer accuracy. If this were the case, a system would not need to wait until an answer is classified as an error; instead, learners could receive extra support during the task. Alternatively, adaptive testing (cf. Wainer et al., 1990) could be employed by presenting learners with tasks from a range of difficulty corresponding to their zone of proximal development.

Two reading conditions were included to validate the relationship between distance to the screen and cognitive processing. The question-after-material condition (material first) might lead to a smaller head-to-screen distance due to selective processing during adaptive mental model specification (cf. Kaakinen et al., 2018). The question-with-material condition (question first) might show larger variation of head-to-screen distance over time, as it involves both initial mental model construction and adaptive mental model specification.

In the current study, children were told to maintain a seated posture while reading multimedia materials at various difficulty levels. An eye tracker was used to measure the distance to the screen. Children's engagement in demanding tasks and their upcoming response accuracy were expected to be related to head-to-screen distance and fluctuation of head-to-screen distance. Accordingly, we proposed the following research questions and predictions.

Research question 1: Can head-to-screen distance and fluctuation of head-to-screen distance allow inferring whether people are engaging in a challenging task?

Prediction 1: With increasing task difficulty, head-to-screen distance decreases.

Prediction 2: With increasing task difficulty, fluctuation of head-to-screen distance increases.

Research question 2: Can head-to-screen distance and fluctuation of head-to-screen distance allow predicting upcoming answer accuracy?

Prediction 3: Prior to incorrect responses to a question, head-to-screen distance decreases.

Prediction 4: Prior to incorrect responses to a question, fluctuation of head-to-screen distance increases.

The data were re-analyzed from a published article (Zhao et al., 2020). More details concerning the method can be found in the abovementioned article.

One hundred forty-four secondary school students without any motor impairments (Mage = 13.0 years, SD = 3.2; 72 females, 72 males) participated in the experiment. All participants had normal or corrected-to-normal vision. A post-hoc power analysis was conducted using G*Power 3.1 (Faul et al., 2009) for testing the difference between easy, medium and difficult questions in the question-after-material and question-with-material reading conditions using a repeated-measures ANOVA. Results indicated that a sample size of 144 students allows detecting an effect size of ηp² = .25 at α = .05 with a statistical power (1 − β) of .99.
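G*Power expresses ANOVA effect sizes as Cohen's f rather than ηp². Assuming the standard conversion between the two (the source does not report which G*Power effect-size option was used), the ηp² = .25 stated above corresponds to:

```latex
f = \sqrt{\frac{\eta_p^2}{1 - \eta_p^2}} = \sqrt{\frac{0.25}{0.75}} = \sqrt{\tfrac{1}{3}} \approx 0.58
```

For reference, Cohen's conventional benchmark for a large effect is f = .40.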

Participants were asked to perform a cognitive task while maintaining a constant seated posture (60-65 cm from the screen). The cognitive task was to read six text-picture materials and answer one question per material. The text-picture materials were selected from authentic German school textbooks in Geography and Biology for grades 5 to 8. We used a within-subject design, in which children were compared with themselves across three difficulty conditions, namely reading and solving (1) easy, (2) medium, and (3) difficult questions, combined with two reading conditions: question-after-material and question-with-material. This design controls for individual differences such as vision, interest, or prior knowledge.

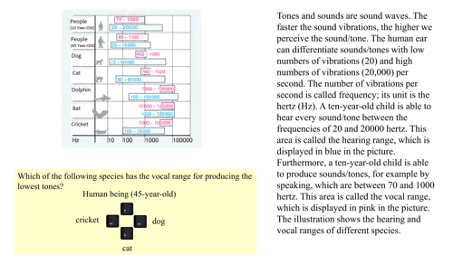

An example of the text-picture materials is presented in Figure 1. Global understanding and question answering require students to integrate the textual and pictorial information. Three questions at easy, medium, and difficult levels were constructed based on the text-picture integration requirements (cf. Wainer, 1992). An easy item requires only element mappings between text and picture. For example, "Which of the following species is able to perceive tones/sounds at 120,000 hertz?" Students have to read the text, find out that the hearing range is shown in blue, search for 120,000 hertz in the picture, find "dolphin and bat", and select the correct answer, "bat". A medium item requires mappings of simple relations. For instance, "Which of the following species has the vocal range for producing the lowest tones (human being, 45-year-old/ cat/ dog/ cricket)?" Students need to read the text and find that the vocal range is shown in pink. Then they should search for the vocal ranges of the human (45-year-old), cat, dog, and cricket, compare the ranges against the x-axis, and find that "dog" is the correct answer. A difficult item requires mappings of more complex relations between text and picture. For instance: "Which of the following four species is able to hear tones below 100 hertz as well as to produce tones below 1000 hertz and above 1500 hertz?" Students need to integrate information from the text and the picture via color-coding. Then they can answer that "cat" is the species that can hear tones below 100 hertz (numbers in blue) and produce tones below 1000 hertz and above 1500 hertz (numbers in pink). Each participant received one of the three questions per text-picture unit according to a Latin square, so that each participant worked on easy, medium and difficult items without re-encountering the same material.

Figure 1. Example of a cognitive reading task on the topic of auditory ranges (translated from German). For each animal, the upper numbers (in pink) give the vocal range, and the lower numbers (in blue) give the hearing range.
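The Latin-square counterbalancing described above can be illustrated with a cyclic 3 × 3 square. The following Python sketch is a hypothetical scheme, not the authors' actual assignment list: it assumes participants are cycled through three groups and that the difficulty assigned to a text-picture unit shifts by one level from unit to unit; the crossing with the two reading conditions is not modeled.

```python
from collections import Counter

DIFFICULTIES = ("easy", "medium", "difficult")


def assign_difficulty(participant_index: int, unit_index: int) -> str:
    """Hypothetical cyclic 3 x 3 Latin-square assignment.

    Participants fall into three groups (index mod 3); within a group the
    difficulty shifts by one level from one text-picture unit to the next.
    """
    group = participant_index % 3
    return DIFFICULTIES[(group + unit_index) % 3]


def schedule(participant_index: int, n_units: int = 6) -> list:
    """Question difficulty for each of a participant's text-picture units."""
    return [assign_difficulty(participant_index, u) for u in range(n_units)]


for p in range(3):
    print(p, schedule(p))

# Each participant sees every difficulty level twice across six units.
print(Counter(schedule(0)))
```

With six units, each participant meets every difficulty level twice, and within each block of three participants every unit's three questions are used exactly once.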

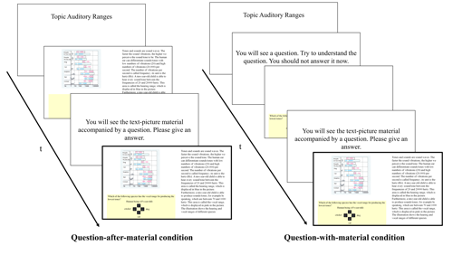

To test the effect of cognitive load on the distance to the screen, we implemented two processing variants (see Figure 2). In the question-after-material condition, participants first received a text-picture unit without any question. This was intended to stimulate initial mental model construction only, because no specific task was shown. Then they were presented the same text-picture unit again, now together with a delayed question. This phase was meant to stimulate adaptive mental model specification, as participants were expected to adapt their mental model to the requirements of the specific question. Additionally, we implemented a question-with-material condition, in which a specific question was presented first, followed by the corresponding text-picture unit while the question remained visible. This condition allows participants to combine initial mental model construction and adaptive mental model specification. We report only comparisons between the last phases of the question-after-material and question-with-material conditions, which were identical with respect to the elements on the screen but differed in prior engagement with the material.

Figure 2. Reading conditions in the study. This study focused only on the distance to the screen in the last phase of the question-after-material and question-with-material conditions (marked with bold frames), as item answering was involved only in these phases.

After obtaining informed consent from the parents, each child was tested individually in a lab environment. First, participants’ verbal and spatial intelligence were tested (Heller & Perleth, 2000). Then they were told to sit still in front of the Tobii XL60 24-inch eye tracker operating at 60 Hz temporal resolution. The text-picture units were self-paced reading tasks. Students pressed the space key to turn pages, and pressed the arrow keys (up/down/left/right) to give answers. No feedback to the answers was provided.

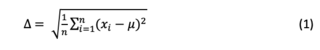

Response accuracy was used to indicate performance on the cognitive task. Two distance parameters were used to indicate performance on the postural task. The head-to-screen distance refers to the distance between the midpoint of both eyes and the screen. The distances of both eyes to the screen were exported directly from Tobii Studio, the software provided by the eye tracker manufacturer. As the cognitive demands of a task can change momentarily as readers proceed through the material (Hyönä & Niemi, 1990; Kaakinen et al., 2018), we examined the time course of head-to-screen distance during a reading task (i.e., from the appearance of the reading material until an answer was given). Specifically, we divided the total fixation duration of each participant for each reading material into five equal intervals, so that each quintile covers 20% of the overall fixations (cf. Lindner et al., 2017; Zhao et al., 2020). The advantage of this method is that it rules out the effect of individual reading speed. In the current study, the distance to the screen was compared across five time segments of engaging with an item (i.e., from the first 20% to the last 20% of the eye-tracking data). The fluctuation of head-to-screen distance is the standard deviation of the distance to the screen across the five quintiles. In equation (1), x_i refers to the distance to the screen in quintile i, μ refers to the mean distance to the screen across the quintiles, and n refers to the number of quintiles (here, n = 5). The fluctuation of head-to-screen distance is thus the square root of the mean squared difference of each quintile from the mean:

$$\sigma = \sqrt{\frac{1}{n}\sum_{i=1}^{n}\left(x_i - \mu\right)^2} \qquad (1)$$
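The computation of the two distance parameters can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' pipeline: the midpoint distance is approximated as the mean of the two per-eye distances exported by the eye tracker, quintiles are formed from equal numbers of time-ordered samples (an approximation to dividing the total fixation duration into five equal parts), and the fluctuation uses the population standard deviation with divisor n = 5, as in equation (1). The function names are hypothetical.

```python
import statistics


def head_to_screen(left_mm: float, right_mm: float) -> float:
    """Midpoint distance, approximated as the mean of both eyes' distances (assumption)."""
    return (left_mm + right_mm) / 2


def quintile_means(distances: list) -> list:
    """Split time-ordered samples into five consecutive, near-equal chunks and average each."""
    n = len(distances)
    if n < 5:
        raise ValueError("need at least five samples")
    bounds = [k * n // 5 for k in range(6)]  # chunk sizes differ by at most one sample
    return [statistics.mean(distances[bounds[k]:bounds[k + 1]]) for k in range(5)]


def fluctuation(distances: list) -> float:
    """Population SD of the five quintile means (divisor n = 5)."""
    return statistics.pstdev(quintile_means(distances))


samples = [600, 600, 610, 610, 620, 620, 630, 630, 640, 640]
print(quintile_means(samples))
print(round(fluctuation(samples), 2))  # 14.14
```

Here the quintile means are 600, 610, 620, 630 and 640 mm, so the fluctuation is √200 ≈ 14.14 mm.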

As a manipulation check, we first report whether item difficulty manipulated cognitive load. We then test the influence of item difficulty and future response accuracy on the distance to the screen and on the fluctuation of the distance to the screen (i.e., the deviation of distance to the screen across the five quintiles).

The cognitive load induced by item difficulty was verified by the accuracy rate and the spatial distance between successive gaze points. That is, we tested whether difficult items indeed demanded more cognitive load than easy and medium items. A repeated-measures ANOVA on the proportion of correct answers with 3 (item difficulty: easy vs. medium vs. difficult) × 2 (reading condition: question after material vs. question with material) within-subject factors showed only a significant main effect of item difficulty, F(1.88, 269.11) = 27.20, p < .001, ηp² = .16 (here and elsewhere, we applied the Greenhouse-Geisser correction when appropriate). Easy items (M = 72.6%, SD = 34.9%) had a higher accuracy rate than medium items (M = 51.4%, SD = 39.2%), t(143) = 5.52, p < .001, d = .46, and difficult items (M = 42.4%, SD = 36.6%), t(143) = 7.48, p < .001, d = .62. There was no significant difference between medium and difficult items, t(143) = 1.92, p = .056, d = .16. No other effects were significant (reading condition; item difficulty × reading condition; Fs < 1), suggesting that presenting questions before or after the materials does not affect response accuracy.
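The reported effect sizes for the paired comparisons are consistent with Cohen's d_z, the mean difference divided by the standard deviation of the differences, which equals t/√N (e.g., 5.52/√144 = .46 and 7.48/√144 ≈ .62). The source does not name the formula, so this is an inference. A minimal stdlib Python sketch with illustrative function names:

```python
import math
import statistics


def paired_t_and_dz(a, b):
    """Paired-samples t statistic and Cohen's d_z for two equal-length samples."""
    if len(a) != len(b) or len(a) < 2:
        raise ValueError("need two equal-length samples with at least two pairs")
    diffs = [x - y for x, y in zip(a, b)]
    n = len(diffs)
    mean_diff = statistics.mean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD of the differences (n - 1)
    t = mean_diff / (sd_diff / math.sqrt(n))
    dz = mean_diff / sd_diff  # algebraically equal to t / sqrt(n)
    return t, dz


def dz_from_t(t, n):
    """Recover d_z from a reported paired t value and sample size."""
    return t / math.sqrt(n)


# Easy vs. medium and easy vs. difficult accuracy contrasts, N = 144.
print(round(dz_from_t(5.52, 144), 2))  # 0.46
print(round(dz_from_t(7.48, 144), 2))  # 0.62
```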

Shorter saccade length has been suggested to indicate higher cognitive load (cf. Debue & van de Leemput, 2014). We computed the Euclidean distance between successive gaze points, that is, between successive samples of the 60 Hz eye tracker (about 16.7 ms apart). The 3 (item difficulty: easy vs. medium vs. difficult) × 2 (reading condition: question after material vs. question with material) ANOVA on saccade length revealed a main effect of item difficulty, F(1.89, 270.65) = 4.21, p = .02, ηp² = .03. Two-tailed paired-samples t-tests showed that easy items (M = 12.25 pixels, SD = 3.49 pixels) had longer saccade lengths than medium items (M = 11.86 pixels, SD = 3.22 pixels), t(143) = 2.40, p = .02, d = .20, and difficult items (M = 11.83 pixels, SD = 3.25 pixels), t(143) = 2.37, p = .02, d = .20. No difference was found between medium and difficult items, p = .85. This suggests that easy items required fewer attentional resources than medium and difficult items. The main effect of reading condition, F(1, 143) = 9.95, p = .002, ηp² = .07, indicated longer distances between successive gaze points in the question-with-material condition (M = 12.29 pixels, SD = 3.40 pixels) than in the question-after-material condition (M = 11.67 pixels, SD = 3.57 pixels). This was not surprising, as participants had read the question first in the question-with-material condition. They hence searched for and selected task-related information in a top-down manner, shifting the attended location quickly over a wide range (cf. Theeuwes & Belopolsky, 2010). The accuracy rate and saccade length therefore confirmed that the cognitive load of answering easy items was lower than that of difficult items.
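The gaze-step measure can be sketched as follows. This is a hypothetical stdlib Python illustration: gaze points are assumed to be time-ordered (x, y) screen coordinates in pixels, one per 60 Hz sample, and pairs involving a lost sample (None) are simply skipped, an assumption about data cleaning that the source does not specify.

```python
import math
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]


def mean_gaze_step(points: Sequence[Optional[Point]]) -> float:
    """Mean Euclidean distance (pixels) between successive gaze samples.

    At 60 Hz, successive samples are about 1/60 s (16.7 ms) apart.
    Pairs in which either sample is missing (None) are skipped.
    """
    steps = [
        math.dist(p, q)
        for p, q in zip(points, points[1:])
        if p is not None and q is not None
    ]
    if not steps:
        raise ValueError("no valid pairs of successive gaze samples")
    return sum(steps) / len(steps)


print(mean_gaze_step([(0, 0), (3, 4), (3, 4), (6, 8)]))  # 3.3333333333333335
```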

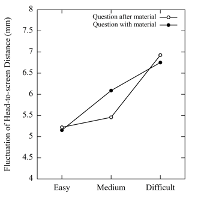

Panel A

Panel B

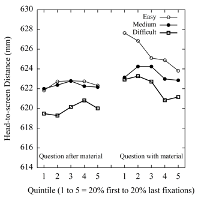

Figure 3. Head-to-screen distance in millimeters across the five quintiles (Panel A) and fluctuation of head-to-screen distance in millimeters (within-subject standard deviation across the five quintiles, averaged over participants) (Panel B) in the question-after-material and question-with-material conditions for easy, medium, and difficult items.

3.2.1. Head-to-screen distance

Participants' average head-to-screen distances ranged from 528.5 mm to 692.3 mm (M = 622.7 mm, SD = 24.2 mm). The 3 (item difficulty: easy vs. medium vs. difficult) × 2 (reading condition: question after material vs. question with material) × 5 (quintile) within-subjects repeated-measures ANOVA on head-to-screen distance revealed a significant main effect of item difficulty, F(2, 286) = 7.54, p = .001, ηp² = .05 (see Figure 3a). This confirmed Prediction 1: the concurrent demand of a difficult task led to a decrease in head-to-screen distance. Participants were closer to the screen with difficult items (M = 621.1 mm, SD = 24.9 mm) than with medium items (M = 622.9 mm, SD = 24.9 mm), t(143) = -2.24, p = .03, d = .19, and easy items (M = 624.1 mm, SD = 24.1 mm), t(143) = -3.68, p < .001, d = .31. There was no significant difference between easy and medium items, p = .10. The reading condition × quintile interaction, F(3.17, 453.03) = 3.06, p = .02, ηp² = .02, indicated a larger distance discrepancy across the five quintiles when participants read the material only once (question with material). No other effects were significant: reading condition, F(1, 143) = 3.73, p = .06, ηp² = .03; quintile, F(3.26, 466.71) = 1.48, p = .22, ηp² = .01; item difficulty × reading condition, item difficulty × quintile, and reading condition × item difficulty × quintile, Fs < 1.
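Each reported ηp² can be recovered from its F ratio and degrees of freedom via ηp² = F·df_effect / (F·df_effect + df_error). Because the Greenhouse-Geisser correction multiplies both degrees of freedom by the same ε, it leaves this value unchanged. A small Python check (function names are illustrative; the ε value below is hypothetical):

```python
def partial_eta_squared(f_value: float, df_effect: float, df_error: float) -> float:
    """Partial eta squared recovered from an F ratio and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)


def gg_corrected_df(epsilon: float, k_levels: int, n_subjects: int):
    """Greenhouse-Geisser-corrected dfs for a one-factor within-subject effect."""
    df_effect = epsilon * (k_levels - 1)
    df_error = epsilon * (k_levels - 1) * (n_subjects - 1)
    return df_effect, df_error


# Item difficulty effect on head-to-screen distance: F(2, 286) = 7.54.
print(round(partial_eta_squared(7.54, 2, 286), 2))  # 0.05

# Scaling both dfs by epsilon (a hypothetical 0.94) leaves eta_p^2 unchanged.
df1, df2 = gg_corrected_df(0.94, 3, 144)
print(round(partial_eta_squared(7.54, df1, df2), 2))  # 0.05
```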

3.2.2. Fluctuation of head-to-screen distance

A 3 (item difficulty: easy vs. medium vs. difficult) × 2 (reading condition: question after material vs. question with material) ANOVA was performed on the fluctuation of head-to-screen distance, that is, the within-subject standard deviation across the five quintiles for each person in each condition. A main effect of item difficulty was revealed, F(1.84, 262.53) = 4.67, p = .01, ηp² = .03. Participants showed larger anteroposterior sway (i.e., larger fluctuation of head-to-screen distance) for difficult items (M = 6.8 mm, SD = 8.0 mm) than for easy items (M = 5.2 mm, SD = 4.9 mm), t(143) = -2.81, p = .006, d = .23, which confirmed Prediction 2 (see Figure 3b). There was no significant difference between easy and medium items (M = 5.8 mm, SD = 5.4 mm), p = .20, or between medium and difficult items, p = .07. No other effects were significant: reading condition, item difficulty × reading condition, Fs < 1.

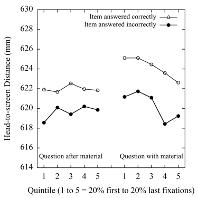

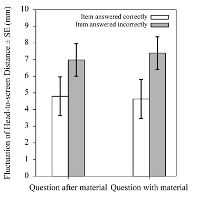

Panel A

Panel B

Figure 4. Head-to-screen distance (Panel A) and fluctuation of head-to-screen distance (Panel B) in the question-after-material and question-with-material conditions for items answered correctly and incorrectly. Error bars in Panel B represent standard errors of the mean.

In the analysis of task difficulty above, all participants could be included, as each participant read materials at easy, medium, and difficult levels. This approach was not feasible for predicting future response accuracy. Here we used the data from 78 of the 144 participants, because they had both correct and incorrect responses in both reading conditions, allowing for a within-subjects analysis. We could thus compare how the same participant regulated the head-to-screen distance when answering questions correctly vs. incorrectly.
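The inclusion rule can be expressed as a simple filter. The sketch below reads "both correct and incorrect responses in both reading conditions" as requiring at least one correct and one incorrect answer within each reading condition, which is what a within-subjects 2 (future answer) × 2 (reading condition) ANOVA needs; the tuple layout and the condition labels are hypothetical.

```python
from collections import defaultdict


def eligible_participants(trials, conditions=("after", "with")):
    """IDs of participants with >= 1 correct and >= 1 incorrect answer per condition.

    trials: iterable of (participant_id, condition, correct) tuples, where
    condition is one of `conditions` (hypothetical labels for
    question-after-material and question-with-material) and correct is a bool.
    """
    outcomes = defaultdict(set)  # (participant, condition) -> observed outcomes
    participants = set()
    for pid, cond, correct in trials:
        outcomes[(pid, cond)].add(bool(correct))
        participants.add(pid)
    return sorted(
        pid
        for pid in participants
        if all(outcomes[(pid, c)] == {True, False} for c in conditions)
    )


trials = [
    (1, "after", True), (1, "after", False), (1, "with", True), (1, "with", False),
    (2, "after", True), (2, "with", True), (2, "with", False),
]
print(eligible_participants(trials))  # [1]
```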

3.3.1. Head-to-screen distance

As shown in Figure 4a, a 2 (future answer: correct vs. incorrect) × 2 (reading condition: question after material vs. question with material) × 5 (quintile) within-subjects repeated-measures ANOVA on head-to-screen distance showed no significant effect of future answer, F(1, 77) = 1.93, p = .17, ηp² = .02. No other effects were significant: quintile, F(2.88, 221.65) = 1.32, p = .27, ηp² = .02; reading condition × quintile, F(3.13, 241.05) = 2.58, p = .052, ηp² = .03; future answer × reading condition × quintile, F(3.06, 235.87) = 1.57, p = .20, ηp² = .02; reading condition, future answer × reading condition, and future answer × quintile, Fs < 1. These results disconfirmed Prediction 3 and indicate that head-to-screen distance does not differentiate between future correct and incorrect answers.

3.3.2. Fluctuation of head-to-screen distance

We conducted a 2 (future answer: correct vs. incorrect) × 2 (reading condition: question after material vs. question with material) ANOVA on fluctuation of head-to-screen distance. It revealed a main effect of future answer, F(1, 77) = 9.17, p = .003, ηp² = .11, indicating larger fluctuation of distance to the screen when the item would subsequently be answered incorrectly (M = 7.3 mm, SD = 8.6 mm) than when it would be answered correctly (M = 4.7 mm, SD = 3.9 mm). This confirmed Prediction 4. No main effect or interaction involving reading condition was found, Fs < 1, indicating that fluctuation of head-to-screen distance predicted upcoming response accuracy regardless of reading condition.
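The ηp² values reported in these analyses can be checked against the F statistics with the standard identity ηp² = F·df_effect / (F·df_effect + df_error). The fractional degrees of freedom appear to reflect a sphericity correction (such as Greenhouse–Geisser), which multiplies both dfs by the same ε, so the correction cancels out of this ratio. The Python sketch below is our illustration, not part of the original analysis pipeline.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its dfs."""
    numerator = f_value * df_effect
    return numerator / (numerator + df_error)

# Values reported in the text: (F, df_effect, df_error).
reported = {
    "future answer (fluctuation)": (9.17, 1, 77),
    "future answer (distance)": (1.93, 1, 77),
    "item difficulty (fluctuation)": (4.67, 1.84, 262.53),
}
for label, (f, df1, df2) in reported.items():
    # Prints 0.11, 0.02 and 0.03, matching the reported values.
    print(f"{label}: {partial_eta_squared(f, df1, df2):.2f}")
```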

Our main goal in this study was to examine whether regulation of distance to the screen (i.e., head-to-screen distance and fluctuation of head-to-screen distance) is influenced by task difficulty and whether it can predict upcoming incorrect responses. In a within-subjects design, secondary school children were instructed to solve multimedia reading tasks at easy vs. medium vs. difficult levels while maintaining a seated posture. We expected regulation of distance to the screen to deteriorate when children concurrently performed a difficult task.

The major finding is that the concurrent demand of difficult tasks led to a deterioration in regulating distance to the screen, which is in agreement with studies on cognitive-postural control (Chong et al., 2010; Igarashi et al., 2016; Kerr et al., 1985; Lajoie et al., 1993; Patel & Bhatt, 2015; Stelmach et al., 1990). This suggests that distance to the screen can be used to monitor the scaffolding needs of learners interacting with electronic devices. Moreover, we observed effects of task difficulty even though learners were instructed not to move. Without such an instruction, effects of task difficulty on distance regulation might therefore be larger than those observed in the current study. Future work will have to determine whether the effects are large enough to support individual online diagnosis of task engagement, or whether they are limited to scenarios in which group-averaged data can be analyzed online (e.g., a whole classroom engaging in one task, with each student working on an electronic device).

In our setup, children tended to move slightly closer to the screen when performing more challenging tasks (difficult vs. medium vs. easy), even though they were seated and instructed to stay still. The findings suggest that reduced head-to-screen distance can reflect high cognitive load, replicating results of previous studies (Balaban et al., 2004; Bonnet et al., 2017; Kaakinen et al., 2018; Qiu & Helbig, 2012). According to Kaakinen et al. (2018), the mechanism underlying a closer distance to the screen is a high demand for visual precision: on the one hand, the task is difficult in terms of content; on the other hand, readers need visual precision to recognize and process the information. Previous studies have shown that hard-to-read stimuli (e.g., low stimulus-background contrast) can lead to a lack of conflict adaptation (Fritz et al., 2015) and that stimulus size is closely linked to the properties of early information processing (Bayer et al., 2012). Readers may therefore need to move closer to the screen when tasks are difficult. However, the larger body sway (i.e., fluctuation of head-to-screen distance) under high cognitive load contradicts previous findings (Balaban et al., 2004; Bonnet et al., 2017; Kaakinen et al., 2018; Qiu & Helbig, 2012). A likely reason is that the task employed in the current study differs from those used in these studies: text reading without answering items (Kaakinen et al., 2018), identifying planes in the Warship Commander Task (Balaban et al., 2004), the visual search task “Where is Wally” (Bonnet et al., 2017), and a two-digit addition task (Qiu & Helbig, 2012). In these tasks, body stability can be beneficial, because it allows the eyes to locate relevant information efficiently.

However, the current study used a cognitive task in which participants had to read multimedia materials and answer questions. Larger body sway under high cognitive load can be interpreted as reflecting the dynamic process of searching for and locating task-relevant sentences during reading. For example, with the material shown in Figure 1, an easier item only requires participants to map elements via color coding and to compare relatively simple relations (e.g., “Which of the following species has the vocal range for producing the lowest tones?”). In contrast, a more difficult item requires them to map more complex relations between text and picture by extracting task-relevant information (e.g., “Which of the following four species is able to hear tones below 100 hertz as well as to produce tones below 1000 hertz and above 1500 hertz?”). Accordingly, readers “zoom in” their attention on task-relevant segments and “zoom out” on task-irrelevant segments (cf. Kaakinen & Hyönä, 2014). These attentional adjustments were likely more varied when the cognitive task was difficult than when it was medium or easy.

Only the fluctuation of head-to-screen distance (rather than mean head-to-screen distance) predicted upcoming response accuracy. Incorrect responses can signal cognitive overload, resulting in increased demands on attentional resources (cf. Sweller, 1988). When children are unable to solve a task, their cognitive capacity may be overloaded, and they may struggle with the task and feel stressed. According to Doumas et al. (2018), increased emotional stress caused by difficult tasks can lead to larger body sway. We therefore assume that the larger fluctuation of head-to-screen distance preceding incorrect responses was due to increased cognitive load and emotional stress. Furthermore, the fluctuation of distance to the screen may be more sensitive to an individual’s cognitive capacity, because it is computed from the deviation of each quintile’s distance from that person’s mean distance across the five quintiles (see Equation 1) and is thus expressed relative to the individual’s own baseline. In sum, fluctuation of head-to-screen distance was influenced by task difficulty and predicted upcoming response accuracy.

According to the Multiple Resource Theory (Wickens, 2002), two tasks interfere with each other when they draw on similar attentional resources, because processing capacity is limited. Using dual-task paradigms, many studies have provided evidence of cognitive-postural interference (e.g., Chen et al., 2018; Kerr et al., 1985; Patel & Bhatt, 2015; Stelmach et al., 1990; Woollacott & VanderVelde, 2008). These studies clearly indicate that the mechanisms responsible for postural control interact with higher-level cognitive systems. In the current study, we found that regulation of distance to the screen deteriorated when it was accompanied by a difficult cognitive task. There is possibly a trade-off in attentional resources between regulating distance to the screen and performing the cognitive task: when the cognitive task is attentionally demanding, fewer attentional resources can be allocated to regulating distance to the screen, and performance on this regulation consequently declines. Our findings support the hypothesis that regulation of distance to the screen can be influenced by task difficulty.

Furthermore, several studies have demonstrated that controlling and regulating simple postural tasks such as sitting still require attention. Langhanns and Müller (2018) reported the largest decrements in cognitive task performance when participants concurrently had to sit still, compared with relaxed lying and slight movement. Igarashi et al. (2016) observed a detrimental effect on postural control when children concurrently performed difficult cognitive tasks. The present experiment showed a decline in the regulation of distance to the screen when children performed a challenging cognitive task (reading and answering difficult vs. medium vs. easy items). These observations suggest that even seemingly effortless actions such as sitting require some cognitive processing. While sitting, ongoing muscle contraction is necessary to avoid bending the trunk forward (cf. O’Sullivan et al., 2002, 2006). In addition, while maintaining a seated posture, unwanted movements must be inhibited, which may impose higher resource demands than executing a movement (cf. Huestegge & Koch, 2014). With a concurrent cognitive task, less attention may be available for maintaining the seated posture. As a result, participants controlled their seated position less well: their bodies moved closer to the screen, and the amplitude of movement in the anteroposterior direction became larger. Nevertheless, the effects on the distance measures are rather small, as sitting is a relatively stable posture (Igarashi et al., 2016). Yet this does not imply that the measures are useless for practical purposes. In this eye-tracking experiment, children were instructed to sit still and not to move. Thus, we tested the measures under conditions that should have made it difficult to find any effect at all. If students do not receive the instruction “not to move”, the mean shift and the fluctuation of distance to the screen might show stronger effects.

The cognitive task in this study was reading multimedia materials, a ubiquitous learning scenario in the classroom. According to previous studies (Gyselinck et al., 2000, 2002; Kruley et al., 1994), the visuospatial sketchpad is involved in integrative processing: the perceptual analysis and storage of pictorial information and the matching of information in texts and pictures. Previous research has demonstrated that spatial cognitive tasks interfere more with postural control than non-spatial cognitive tasks do (Chen et al., 2018; Fuhrman et al., 2015; Kerr et al., 1985; VanderVelde et al., 2005). The comprehension task in the current study is much more complex, as it involves multimodal processing that combines verbal and pictorial processing (Mayer, 2009; Paivio, 1986; Schnotz & Bannert, 2003). Nevertheless, we still observed an effect of cognitive task difficulty on the distance parameters.

Distance to the screen showed a significant interaction of reading condition and quintile. When the question had been presented upfront, readers moved closer to the screen over the course of processing the multimedia material to find the answer. In contrast, distance to the screen varied less across quintiles when participants processed question and material after having had a chance to process the multimedia material without a question. In the question-after-material condition, readers should already have engaged in initial mental model construction before Quintile 1 and then engage in adaptive mental model specification in light of the question that has now become available (cf. Zhao et al., 2020). Apparently, this is not accompanied by moving closer to the screen. Such dynamic movement is, however, observed in the question-with-material condition. Because the question is presented first, readers can approach the multimedia material from the perspective offered by the question from Quintile 1 onwards. Yet, although reading can be selective, some initial mental model construction is still required before adaptive mental model specification can take place. Given indications that encountering task-relevant segments of the material can lead to a shorter head-to-screen distance (Kaakinen & Hyönä, 2014), the reduction in distance over time might reflect that, after initial mental model construction, readers in later quintiles become increasingly likely to find and identify task-relevant segments of the multimedia material.

One could argue that head-to-screen distance may be moderated by other factors, such as prior knowledge, vision (no glasses vs. glasses for near-sightedness vs. glasses for far-sightedness), pubertal status, or interest. These factors always differ between individuals and therefore cannot easily be controlled by instructors when designing lessons or assignments. Task difficulty, however, is relatively easy to control. In the current study, we constructed questions at different difficulty levels based on their text-picture requirements (cf. Wainer, 1992). By using a within-subjects design with a large sample, in which each child worked on (1) easy, (2) medium, and (3) difficult questions, we were able to control for inter-individual differences. These individual differences can be regarded as constituting individually different baselines for head-to-screen distance, from which an individual deviates according to the task difficulty he or she experiences.
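The notion of individual baselines can be illustrated with person-mean centering: each observation is expressed as a deviation from that person's own mean, so stable between-person differences (e.g., due to vision or habitual posture) drop out and only within-person changes remain. This Python sketch is a hypothetical illustration of the reasoning, not the analysis reported here; the data layout and values are invented.

```python
import statistics

def center_within_person(distances_by_person):
    """Return each person's distances as deviations from their own mean (mm)."""
    centered = {}
    for pid, values in distances_by_person.items():
        baseline = statistics.fmean(values)  # person-specific baseline
        centered[pid] = [d - baseline for d in values]
    return centered

# Two hypothetical children with different baselines but the same
# within-person pattern across easy, medium and difficult items.
data = {"child_a": [500, 495, 490], "child_b": [650, 645, 640]}
print(center_within_person(data))
# {'child_a': [5.0, 0.0, -5.0], 'child_b': [5.0, 0.0, -5.0]}
```

Although the two children sit 150 mm apart on average, the centered values are identical, which is the sense in which a within-subjects comparison sets the baselines aside.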

Whether the results of the present study generalize to other tasks or samples remains to be investigated. As children’s cognitive systems are still developing, young adults may show smaller detrimental dual-task effects when performing a postural task concurrently with a cognitive task (e.g., less body sway in Rankin et al., 2000). Due to the decline in executive and cognitive functions, older adults (66–84 years) tended to show larger body sway than young adults (19–30 years) under demanding cognitive load (Stelzel et al., 2017). Further research should examine whether regulation of distance to the screen is a relevant measure in other age groups as well. As the medium-difficulty questions in this study did not differ from the easy or difficult questions in some comparisons, it would be of particular interest to study whether, and to what extent, more clearly differentiated levels of task difficulty affect the regulation of distance to the screen. Furthermore, while high-performance instruments for tracking posture parameters exist (e.g., Nintendo Wii), it is relevant to explore the power of simpler indicators that might soon be readily available in interfaces for interacting with electronic media. This study used an eye tracker to track distance to the screen. Yet smartphones and tablets can also track distance to the screen via the front camera (cf. Kaltwasser et al., 2017; Roig-Maimó et al., 2018). Future research should test the sensitivity of distance to the screen measured with mobile phones or other devices.
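As a rough illustration of how a front camera could yield a distance estimate, the sketch below uses the pinhole camera approximation: distance ≈ focal length (in pixels) × real size of a facial feature / its size in the image (in pixels). Using interpupillary distance as the reference feature, and all numbers, are assumptions for illustration; this is not the method of the cited studies, and a real application would need per-device calibration and a person-specific interpupillary distance.

```python
def estimate_distance_mm(ipd_px, focal_length_px, ipd_mm):
    """Pinhole-camera estimate of camera-to-face distance (hypothetical helper).

    ipd_px: interpupillary distance measured in the image (pixels).
    focal_length_px: camera focal length in pixels (assumed known from
        calibration).
    ipd_mm: assumed real interpupillary distance of this user (mm).
    """
    if ipd_px <= 0:
        raise ValueError("ipd_px must be positive")
    return focal_length_px * ipd_mm / ipd_px

# Hypothetical values: as the head approaches, the eyes appear farther apart.
print(estimate_distance_mm(ipd_px=100, focal_length_px=1000, ipd_mm=60))  # 600.0
print(estimate_distance_mm(ipd_px=120, focal_length_px=1000, ipd_mm=60))  # 500.0
```

A series of such per-frame estimates could then feed the same quintile-based fluctuation measure used with the eye tracker.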

This study provides tentative first evidence that distance measures might become useful indicators of learners who are encountering difficulties in self-regulated multimedia learning. As task difficulty increased, children were less able to maintain a constant distance to the screen. Fluctuation of head-to-screen distance seems to be the most promising measure, as it was not only influenced by task difficulty but also predicted future response accuracy. The study also has educational implications. It may be better to allow children to sit freely when they perform difficult tasks on a computer screen (see also Igarashi et al., 2016; Langhanns & Müller, 2018; Reilly et al., 2008). In the long run, tracking distance to the screen might thus help to scaffold self-regulated learning. When fluctuation of head-to-screen distance increases, computerized learning systems could assess the user’s level of knowledge, detect current high cognitive load, and provide adaptive assistance to facilitate deeper learning. An alternative is to employ adaptive testing (cf. Wainer et al., 1990) with tasks tailored from a range of difficulty corresponding to the learner’s zone of proximal development. Nevertheless, it is important to note that distance to the screen can differ between individuals, for instance owing to prior knowledge or vision. Here we only considered variability in distance to the screen within persons. Further research should focus more on the effect of individual differences.
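A learning system of the kind envisioned above might compare the fluctuation of distance to the screen within a short moving window against a person-specific baseline. The Python sketch below is hypothetical: the class name, window length, threshold factor of 1.5, and use of the sample standard deviation are illustrative assumptions, not values derived from this study, and a flag would at most suggest offering support.

```python
import statistics
from collections import deque

class FluctuationMonitor:
    """Flags windows whose distance fluctuation exceeds a personal baseline.

    Hypothetical sketch: window size and threshold factor are illustrative,
    not values validated in the study.
    """

    def __init__(self, baseline_sd_mm, window=5, factor=1.5):
        if window < 2:
            raise ValueError("window must hold at least two samples")
        self.baseline_sd_mm = baseline_sd_mm
        self.factor = factor
        self.samples = deque(maxlen=window)  # keeps only the latest samples

    def update(self, distance_mm):
        """Add one distance sample; return True when support might be offered."""
        self.samples.append(distance_mm)
        if len(self.samples) < self.samples.maxlen:
            return False  # not enough data in the window yet
        current_sd = statistics.stdev(list(self.samples))
        return current_sd > self.factor * self.baseline_sd_mm

monitor = FluctuationMonitor(baseline_sd_mm=4.0)  # hypothetical baseline
for d in [600, 612, 590, 615, 585]:
    flagged = monitor.update(d)
print(flagged)  # True
```

Comparing against each learner's own baseline, rather than a fixed group threshold, follows the within-person logic of the analyses above.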

This study is part of the BITE Project on text-picture integration funded by the German Research Foundation (Grant No: SCHN 665/3-1, SCHN 665/6-1, SCHN 665/6-2) within the Special Research Program ‘Competence models for assessing individual learning results and for balancing of educational processes’. We thank Robert Szwarc for making the graphs. We thank the University of Hagen for supporting the publication of this work.

Atkinson, R. C., & Shiffrin, R. M. (1968). Human memory: A proposed system and its control processes. In K. W. Spence & J. T. Spence (Eds.), The psychology of learning and motivation: Advances in research and theory (Vol. 2, pp. 89–195). Academic Press.

Baddeley, A. (1986). Working memory. Oxford University Press.

Balaban, C. D., Cohn, J., Redfern, M. S., Prinkey, J., Stripling, R., & Hoffer, M. (2004). Postural control as a probe for cognitive state: Exploiting human information processing to enhance performance. International Journal of Human-Computer Interaction, 17(2), 275–286. https://doi.org/10.1207/s15327590ijhc1702_9

Bayer, M., Sommer, W., & Schacht, A. (2012). Font size matters—Emotion and attention in cortical responses to written words. PLOS ONE, 7(5), e36042. https://doi.org/10.1371/journal.pone.0036042

Blanchard, Y., Carey, S., Coffey, J., Cohen, A., Harris, T., Michlik, S., & Pellecchia, G. L. (2005). The influence of concurrent cognitive tasks on postural sway in children. Pediatric Physical Therapy, 17(3), 189–193. https://doi.org/10.1097/01.pep.0000176578.57147.5d

Bonnet, C. T., Szaffarczyk, S., & Baudry, S. (2017). Functional synergy between postural and visual behaviors when performing a difficult precise visual task in upright stance. Cognitive Science, 41(6), 1675–1693. https://doi.org/10.1111/cogs.12420

Chen, Y., Yu, Y., Niu, R., & Liu, Y. (2018). Selective effects of postural control on spatial vs. nonspatial working memory: A functional near-infrared spectral imaging study. Frontiers in Human Neuroscience, 12, 243. https://doi.org/10.3389/fnhum.2018.00243

Chong, R. K., Mills, B., Dailey, L., Lane, E., Smith, S., & Lee, H. (2010). Specific interference between a cognitive task and sensory organization for stance balance control in healthy young adults: Visuospatial effects. Neuropsychologia, 48(9), 2709–2718. https://doi.org/10.1016/j.neuropsychologia.2010.05.018

Debue, N., & van de Leemput, C. (2014). What does germane load mean? An empirical contribution to the cognitive load theory. Frontiers in Psychology, 5, 1099. https://doi.org/10.3389/fpsyg.2014.01099

Donath, L., Roth, R., Lichtenstein, E., Elliot, C., Zahner, L., & Faude, O. (2015). Jeopardizing Christmas: Why spoiled kids and a tight schedule could make Santa Claus fall? Gait & Posture, 41(3), 745–749. https://doi.org/10.1016/j.gaitpost.2014.12.010

Doumas, M., Morsanyi, K., & Young, W. R. (2018). Cognitively and socially induced stress affects postural control. Experimental Brain Research, 236(1), 305–314. https://doi.org/10.1007/s00221-017-5128-8

Faul, F., Erdfelder, E., Buchner, A., & Lang, A. G. (2009). Statistical power analyses using G*Power 3.1: Tests for correlation and regression analyses. Behavior Research Methods, 41(4), 1149–1160. https://doi.org/10.3758/BRM.41.4.1149

Fritz, J., Fischer, R., & Dreisbach, G. (2015). The influence of negative stimulus features on conflict adaption: Evidence from fluency of processing. Frontiers in Psychology, 6, 185. https://doi.org/10.3389/fpsyg.2015.00185

Fuhrman, S. I., Redfern, M. S., Jennings, J. R., & Furman, J. M. (2015). Interference between postural control and spatial vs. non-spatial auditory reaction time tasks in older adults. Journal of Vestibular Research: Equilibrium & Orientation, 25(2), 47–55. https://doi.org/10.3233/VES-150546

Garcia-Barrios, V., Gütl, C., Preis, A. M., Andrews, K., Pivec, M., Mödritscher, F., & Trummer, C. (2004). AdELE: A framework for adaptive e-learning through eye tracking. I-Know’04, 609–616.

Gaschler, R., Zhao, F., Röttger, E., Panzer, S., & Haider, H. (2019). More than hitting the correct key quickly: Spatial variability in touch screen response location under multitasking in the serial reaction time task. Experimental Psychology, 66(3), 207–220. https://doi.org/10.1027/1618-3169/a000446

Gentner, D. (1989). The mechanisms of analogical learning. In S. Vosniadou & A. Ortony (Eds.), Similarity and analogical reasoning (pp. 199–241). Cambridge University Press.

Gyselinck, V., Cornoldi, C., Dubois, V., De Beni, R., & Ehrlich, M.-F. (2002). Visuospatial memory and phonological loop in learning from multimedia. Applied Cognitive Psychology, 16(6), 665–685. https://doi.org/10.1002/acp.823

Gyselinck, V., Ehrlich, M.-F., Cornoldi, C., De Beni, R., & Dubois, V. (2000). Visuospatial working memory in learning from multimedia systems. Journal of Computer Assisted Learning, 16(2), 166–176. https://doi.org/10.1046/j.1365-2729.2000.00128.x

Huestegge, L., & Koch, I. (2014). When two actions are easier than one: How inhibitory control demands affect response processing. Acta Psychologica, 151, 230–236. https://doi.org/10.1016/j.actpsy.2014.07.001

Hyönä, J., & Niemi, P. (1990). Eye movements during repeated reading of a text. Acta Psychologica, 73(3), 259–280. https://doi.org/10.1016/0001-6918(90)90026-C

Igarashi, G., Karashima, C., & Hoshiyama, M. (2016). Effect of cognitive load on seating posture in children. Occupational Therapy International, 23(1), 48–56. https://doi.org/10.1002/oti.1405

Johnson-Laird, P. N. (1983). Mental models: Towards a cognitive science of language, inference, and consciousness. Oxford University Press.

Kaakinen, J. K., Ballenghein, U., Tissier, G., & Baccino, T. (2018). Fluctuation in cognitive engagement during reading: Evidence from concurrent recordings of postural and eye movements. Journal of Experimental Psychology: Learning, Memory, and Cognition, 44(10), 1671–1677. https://doi.org/10.1037/xlm0000539

Kaakinen, J. K., & Hyönä, J. (2014). Task relevance induces momentary changes in the functional visual field during reading. Psychological Science, 25(2), 626–632. https://doi.org/10.1177/0956797613512332

Kaltwasser, L., Moore, K., Weinreich, A., & Sommer, W. (2017). The influence of emotion type, social value orientation and processing focus on approach-avoidance tendencies to negative dynamic facial expressions. Motivation and Emotion, 41(4), 532–544. https://doi.org/10.1007/s11031-017-9624-8

Kerr, B., Condon, S. M., & McDonald, L. A. (1985). Cognitive spatial processing and the regulation of posture. Journal of Experimental Psychology: Human Perception and Performance, 11(5), 617–622. https://doi.org/10.1037/0096-1523.11.5.617

Kintsch, W., & van Dijk, T. A. (1978). Toward a model of text comprehension and production. Psychological Review, 85(5), 363–394. https://doi.org/10.1037/0033-295X.85.5.363

Knauff, M., & Johnson-Laird, P. N. (2002). Visual imagery can impede reasoning. Memory & Cognition, 30(3), 363–371. https://doi.org/10.3758/BF03194937

Kruley, P., Sciama, S. C., & Glenberg, A. M. (1994). On-line processing of textual illustrations in the visuospatial sketchpad: Evidence from dual-task studies. Memory & Cognition, 22(3), 261–272. https://doi.org/10.3758/BF03200853

Lajoie, Y., Teasdale, N., Bard, C., & Fleury, M. (1993). Attentional demands for static and dynamic equilibrium. Experimental Brain Research, 97(1), 139–144. https://doi.org/10.1007/BF00228824

Langhanns, C., & Müller, H. (2018). Effects of trying ‘not to move’ instruction on cortical load and concurrent cognitive performance. Psychological Research, 82(1), 167–176. https://doi.org/10.1007/s00426-017-0928-9

Lindner, M. A., Eitel, A., Strobel, B., & Köller, O. (2017). Identifying processes underlying the multimedia effect in testing: An eye-movement analysis. Learning and Instruction, 47, 91–102. https://doi.org/10.1016/j.learninstruc.2016.10.007

Mayer, R. E. (2009). Multimedia learning. Cambridge University Press.

Maylor, E. A., & Wing, A. M. (1996). Age differences in postural stability are increased by additional cognitive demands. The Journals of Gerontology Series B: Psychological Sciences and Social Sciences, 51(3), 143–154. https://doi.org/10.1093/geronb/51B.3.P143

McCrudden, M. T., & Schraw, G. (2007). Relevance and goal-focusing in text processing. Educational Psychology Review, 19, 113–139. https://doi.org/10.1007/s10648-006-9010-7

O’Sullivan, P. B., Dankaerts, W., Burnett, A. F., Farrell, G. T., Jefford, E., Naylor, C. S., & O’Sullivan, K. J. (2006). Effect of different upright sitting postures on spinal-pelvic curvature and trunk muscle activation in a pain-free population. Spine (Phila Pa 1976), 31(19), E707–E712. https://doi.org/10.1097/01.brs.0000234735.98075.50

O’Sullivan, P. B., Grahamslaw, K. M., Kendell, M., Lapenskie, S. C., Möller, N. E., & Richards, K. V. (2002). The effect of different standing and sitting postures on trunk muscle activity in a pain-free population. Spine (Phila Pa 1976), 27(11), 1238–1244. https://doi.org/10.1097/00007632-200206010-00019

Paivio, A. (1986). Mental representations: A dual coding approach. Oxford University Press, Clarendon Press.

Patel, P. J., & Bhatt, T. (2015). Attentional demands of perturbation evoked compensatory stepping responses: Examining cognitive-motor interference to large magnitude forward perturbations. Journal of Motor Behavior, 47(3), 201–210. https://doi.org/10.1080/00222895.2014.971700

Pellecchia, G. L. (2003). Postural sway increases with attentional demands of concurrent cognitive task. Gait & Posture, 18(1), 29–34. https://doi.org/10.1016/S0966-6362(02)00138-8

Qiu, J., & Helbig, R. (2012). Body posture as an indicator of workload in mental work. Human Factors, 54(4), 626–635. https://doi.org/10.1177/0018720812437275

Rankin, J. K., Woollacott, M. H., Shumway-Cook, A., & Brown, L. A. (2000). Cognitive influence on postural stability: A neuromuscular analysis in young and older adults. The Journals of Gerontology: Series A: Biological Sciences and Medical Sciences, 55(3), M112–M119. https://doi.org/10.1093/gerona/55.3.M112

Reilly, D. S., van Donkelaar, P., Saavedra, S., & Woollacott, M. H. (2008). Interaction between the development of postural control and the executive function of attention. Journal of Motor Behavior, 40(2), 90–102. https://doi.org/10.3200/JMBR.40.2.90-102

Rival, C., Ceyte, H., & Olivier, I. (2005). Developmental changes of static standing balance in children. Neuroscience Letters, 376(2), 133–136. https://doi.org/10.1016/j.neulet.2004.11.042

Roig-Maimó, M. F., MacKenzie, I. S., Manresa-Yee, C., & Varona, J. (2018). Head-tracking interfaces on mobile devices: Evaluation using Fitts’ law and a new multi-directional corner task for small displays. International Journal of Human-Computer Studies, 112, 1–15. https://doi.org/10.1016/j.ijhcs.2017.12.003

Sakaguchi, M., Taguchi, K., Miyashita, Y., & Katsuno, S. (1994). Changes with aging in head and center of foot pressure sway in children. International Journal of Pediatric Otorhinolaryngology, 29(2), 101–109. https://doi.org/10.1016/0165-5876(94)90089-2

Schaefer, S., Schellenbach, M., Lindenberger, U., & Woollacott, M. H. (2015). Walking in high-risk settings: Do older adults still prioritize gait when distracted by a cognitive task? Experimental Brain Research, 233(1), 79–88. https://doi.org/10.1007/s00221-014-4093-8

Scheiter, K., Schubert, C., Schüler, A., Schmidt, H., Zimmermann, G., Wassermann, B., Krebs, M.-C., & Eder, T. (2019). Adaptive multimedia: Using gaze-contingent instructional guidance to provide personalized processing support. Computers & Education, 139, 31–47. https://doi.org/10.1016/j.compedu.2019.05.005

Schmid, M., Conforto, S., Lopez, L., & D’Alessio, T. (2007). Cognitive load affects postural control in children. Experimental Brain Research, 179(3), 375–385. https://doi.org/10.1007/s00221-006-0795-x

Schmid, M., Conforto, S., Lopez, L., Renzi, P., & D’Alessio, T. (2005). The development of postural strategies in children: A factorial design study. Journal of NeuroEngineering and Rehabilitation, 2(1), 29. https://doi.org/10.1186/1743-0003-2-29

Schnotz, W., & Bannert, M. (2003). Construction and interference in learning from multiple representation. Learning and Instruction, 13(2), 141–156. https://doi.org/10.1016/S0959-4752(02)00017-8

Schnotz, W., & Wagner, I. (2018). Construction and elaboration of mental models through strategic conjoint processing of text and pictures. Journal of Educational Psychology, 110(6), 850–863. https://doi.org/10.1037/edu0000246

Sims, V. K., & Hegarty, M. (1997). Mental animation in the visuospatial sketchpad: Evidence from dual-task studies. Memory & Cognition, 25(3), 321–332. https://doi.org/10.3758/BF03211288

Stelmach, G. E., Populin, L., & Müller, F. (1990). Postural muscle onset and voluntary movement in the elderly. Neuroscience Letters, 117(1), 188–193. https://doi.org/10.1016/0304-3940(90)90142-V

Stelzel, C., Schauenburg, G., Rapp, M. A., Heinzel, S., & Granacher, U. (2017). Age-related interference between the selection of input-output modality mappings and postural control – A pilot study. Frontiers in Psychology, 8, 613. https://doi.org/10.3389/fpsyg.2017.00613

Sweller, J. (1988). Cognitive load during problem solving: Effects on learning. Cognitive Science, 12(2), 257–285. https://doi.org/10.1207/s15516709cog1202_4

Theeuwes, J., & Belopolsky, A. (2010). Top-down and bottom-up control of visual selection: Controversies and debate. In V. Coltheart (Ed.), Tutorials in visual cognition. Psychology Press, Taylor & Francis Group.

VanderVelde, T. J., Woollacott, M. H., & Shumway-Cook, A. (2005). Selective utilization of spatial working memory resources during stance posture. NeuroReport, 16(7), 773–777. https://doi.org/10.1097/00001756-200505120-00023

Wainer, H. (1992). Understanding graphs and tables. Educational Testing Service.

Wainer, H., Dorans, N. J., Green, B. F., Steinberg, L., Flaugher, R., Mislevy, R. J., & Thissen, D. (1990). Computerized adaptive testing: A primer. Lawrence Erlbaum Associates.

Wickens, C. D. (2002). Multiple resources and performance prediction. Theoretical Issues in Ergonomics Science, 3(2), 159–177. https://doi.org/10.1080/14639220210123806

Wickens, C. D., Vidulich, M., & Sandry-Garza, D. (1984). Principles of S-C-R compatibility with spatial and verbal tasks: The role of display-control location and voice-interactive display-control interfacing. Human Factors, 26(5), 533–543. https://doi.org/10.1177/001872088402600505

Woollacott, M. H., & Shumway-Cook, A. (2002). Attention and the control of posture and gait: A review of an emerging area of research. Gait & Posture, 16(1), 1–14. https://doi.org/10.1016/S0966-6362(01)00156-4

Woollacott, M. H., & VanderVelde, T. J. (2008). Non-visual spatial tasks reveal increased interactions with stance postural control. Brain Research, 1208, 95–102. https://doi.org/10.1016/j.brainres.2008.03.005

Zhao, F., Schnotz, W., Wagner, I., & Gaschler, R. (2014). Eye tracking indicators of reading approaches in text-picture comprehension. Frontline Learning Research, 2(4), 46–66. https://doi.org/10.14786/flr.v2i4.98

Zhao, F., Schnotz, W., Wagner, I., & Gaschler, R. (2020). Texts and pictures serve different functions in conjoint mental model construction and adaptation. Memory & Cognition, 48(1), 69–82. https://doi.org/10.3758/s13421-019-00962-0