Frontline Learning Research Vol. 7 No. 3 (2019) 27-63

ISSN 2295-3159

Arcada University of Applied Sciences Helsinki; University of Tampere, Finland.

Article received 26 September 2018 / revised 4 April / accepted 5 July / available online 18 July

With the introduction of internet as a source of information, parents have observed youngsters’ tendency to prefer internet as a source, and almost a reluctance to learn in advance since “you can look it up when needed”. Questions arise, such as ‘Are these phenomena symptoms of changing beliefs about knowledge and learning? Is it at all possible to learn on a deeper level simply by looking up the basic facts, without memorizing them?’ Within an existing line of investigation, epistemic beliefs have been described as a set of dimensions. Although internet-based information and internet as a source of information have been acknowledged, studies so far have not explored how dealing with internet-based information relates to other epistemic beliefs dimensions. To capture how users view internet-based information per se but also in relation to other epistemic beliefs, I suggest three new dimensions, out of which the most crucial is labelled ‘Internet reliance’. Offloading memory using memory aids is not a new phenomenon but the ‘Internet reliance’ dimension indicates that especially internet-reliant users may be confusing external information with personal knowledge, with all the risks it may entail. Besides including beliefs about learning, this study also challenges earlier assumptions regarding uncorrelated dimensions.

Keywords: epistemic beliefs; internet; constructivism; outsourcing knowledge; factor analysis

During the last decade, most people will have heard youngsters respond to a question with the acronyms JFGI or GIYF (“Just F…g Google It” and “Google Is Your Friend”, see https://en.wiktionary.org/wiki/JFGI). For most adults, expecting a proper answer, this response was surprising, puzzling and perhaps even offensive. The response is, however, an illustration of the gap between the parent generation’s “You should know this”-view on knowledge, and the young generation’s stance “I’ll look it up when I need it”.

With the introduction of easy and ubiquitous access to information over the internet, the attitude of looking it up when one needs it became common, especially among frequent internet users. Given that the young generation born after the mid-1980s grew up surrounded by information and communications technologies (hereafter ICT), the interesting question is: has the easy and ubiquitous access to information actually influenced their view on knowledge, knowing and learning?

During the first decade of this millennium, the so-called Digital Natives of the Net generation were supposed to hold characteristics such as being constantly on-line, being ICT savvy and being at home on social media (e.g. Prensky, 2001; Siemens, 2005). Indeed, the youngsters differ from their parent generation in that they lack a personal history of the time before mobile phones, internet and search engines (Gunter, Rowlands, & Nicholas, 2009, p. 3), not to mention smart phones. Large parts of the youngsters within this cohort embrace the opportunities provided by ICT, e.g. preferring internet-based information instead of books (cf. OSF, 2010; Purcell et al., 2012, p. 4). Still, several studies have pointed out the heterogeneity within the generation (cf. Jones & Hosein, 2010; van den Beemt, Akkerman, & Simons, 2011). Also among the students participating in the present study, large differences occurred regarding both self-reported ICT and media use patterns and performance-based ICT skills (Ståhl, 2017).

Within education, the easy and ubiquitous access to information raises concerns about how and upon which information students build their knowledge, since they seem to accept the veracity of on-line information too easily, and lack the skills of thinking critically and synthesizing the information found on-line (Purcell et al., 2012, pp. 26-27). The vast popularity of search engines (with covert operating logics) in combination with users’ lack of critical scrutiny has considerable epistemic implications, as demonstrated in the theoretical work and the studies cited below (section Knowledge and information in the internet era). The present study will build upon the above studies that confirm the existence of the JFGI phenomenon.

Existing self-report instruments for measuring epistemic beliefs are not capable of capturing signs indicating internet-induced changes in the views of knowledge and learning. Especially the Digital Natives’ ways of dealing with knowledge and learning have been described in literature (some examples in section Hypothesized dimensions) but so far, this topic has been scarcely approached from an epistemic point of view. This topic calls for empirical investigation, which requires instruments.

This paper will describe how the existing dimensions (Structure and Certainty of knowledge, Innate learning ability and Omniscient authority) are extended with the new dimensions Constructivist approach, Internet reliance and Learning by dialogue. Creating a validated instrument requires more than one round and therefore, the aim of this endeavour is an initial exploration of how new dimensions might contribute to a better description of how today’s higher education learners in an internet-saturated context view knowledge and learning.

Contemporary research regarding epistemic beliefs largely subscribes to epistemic beliefs being limited to beliefs about knowledge, and not about learning. The present study will deviate from this view by exploring also views about learning. Doing so, this study contributes to the discussion by looking beyond the knowledge dimensions of epistemic beliefs, and by describing the connection between beliefs about knowledge and beliefs about learning, a connection that is necessary to illuminate consequences for educational practice.

To provide a rationale for the present study, this section will

1) review some studies regarding knowledge, information and epistemic beliefs in the internet era,

2) review epistemic beliefs as a research area,

3) review some arguments regarding learning as part of epistemic beliefs, and

4) discuss why domain specificity and justification of knowledge were omitted from the study at this stage.

George Siemens tried to grasp the impact of technology and the decreasing half-life of knowledge by introducing connectivism as a new learning theory for the digital age. He suggested supplementing the existing forms of propositional (knowing-that) and procedural (knowing-how) knowledge with ‘knowing-where’ and ‘knowing-who’, i.e. an understanding of where to find knowledge. According to Siemens, since we cannot experience everything or store all knowledge ourselves, we store knowledge in other people and in non-human appliances. The key is connectedness, and the knowledge is distributed (Downes, 2007, p. 84; Siemens, 2005). Connectivism was apparently neither a learning theory nor a knowledge theory but rather a pedagogical view. Still, the connectivist ideas resemble the concept of distributed mind, which suggests that knowledge can reside in people, in tools, and in cultural settings, and that the potential lies in the combination of those (cf. Shaffer & Clinton, 2006).

The results of an experimental study by Sparrow and her team suggest that internet has become a kind of extension to our individual memory system. If the net is available, we do not bother to memorize the information itself but rather, where to find the information, as when youngsters respond: “JFGI!” We are becoming increasingly symbiotic with our computer-based tools, growing into interconnected systems that remember less by knowing information than by knowing where to find the information (Sparrow, Liu, & Wegner, 2011).

The concept of the extended mind (Clark & Chalmers, 1998) suggests that human cognition may extend beyond the brain and include elements from social and technological environments (cf. Siemens, 2005). Applying the concept to the context of the web opens up the concept of the web-extended mind, which includes the idea that “… the informational and technological elements of the web can, at least on occasion, constitute part of the material supervenience base for (at least some of) a human agent’s mental states and processes” (Smart, 2012, p. 451). The mere existence of the web does not automatically make it part of a person’s extended mind; in addition, three criteria need to be met: the availability criterion, the trust criterion and the accessibility criterion (Clark & Chalmers, 1998; Smart, 2012). Considering the development that has taken place within the web and smartphone contexts since Smart wrote his article, we have reason to suspect that users often regard these criteria as met, and too easily incorporate on-line information into their personal body of knowledge: due to internet-capable smartphones, the availability and the accessibility criteria are easily met. The problematic part is the trust criterion: on-line information is too easily endorsed and too rarely subject to critical scrutiny (Purcell, Brenner, & Rainie, 2012, pp. 10-11). This is especially problematic since, for example, Google made personalized search the default option for all users in 2009 (Simpson, 2012, p. 437).

The personalization of search results performed by search engines means that the results are tailored to what will probably interest the enquirer, and that those hits that do not fit the enquirer’s profile are ranked down or even omitted. According to Thomas Simpson (2012), the epistemic significance of search engines lies in their acting as surrogate experts, firstly as they assist the enquirer in finding sources and secondly as they orient the enquirer to supposedly relevant sources of information (the expert role is also discussed by Fisher, Goddu, & Keil, 2015, below). The problematic aspect here is that by filtering and ranking the results, the search engine implies a judgment about what is relevant, without the enquirer having either insight into, or the possibility to influence, the criteria for judgement. As Simpson (2012, p. 427) puts it: “… objectivity may require telling enquirers what they do not want to hear, or are not immediately interested in” (my emphasis) (also see Hinman, 2008). Therefore, Simpson regards personalization as an actual threat to objectivity. By leaving out relevant voices, the tailored search results contribute to an epistemic bubble, and the operating logics of search engines combined with the enquirers’ ignorance increase the risk of the enquirer being trapped in an epistemic bubble or even an echo chamber (Nguyen, 2018).

The complexity of the objectivity problem is illustrated by the findings of Purcell, Brenner, & Rainie (2012): although a majority in their study disapproved of search engines collecting information about their searches, 23-29% thought that using the information for personalizing search results was a positive feature (pp. 19-21). Further, on average two thirds of the participants believed that the information provided by search engines was fair and unbiased: the younger, the more they relied on search engines’ objectivity (pp. 10-11). A further aspect illustrating the objectivity problem is the ritualization described by Bhatt & MacKenzie (2019), i.e. students’ information seeking practices being largely motivated by adhering to what they call the rules of the game. These rules can be appropriate in the beginning to introduce students to the knowledge creation practices of the discipline but when retained too long, they may inhibit the development of students’ information seeking skills and trust in their own capacity to consider the justification of the information they find.

In an experimental study, Fisher et al. (2015) highlight the risks embedded in ubiquitous access to information, which may blur the boundaries between personal knowledge and external information, thus creating an illusion of possessing personal understanding. Further, their results suggest that some individuals tend to regard internet as an expert regardless of domain. These results pose a true challenge for education at all levels, at least if we consider personal and integrated knowledge, instead of loose bits of information, as the objective of education and learning.

Miller & Record (2013) discuss the covert operating logics of search engines and their epistemic implications using a framework building upon a responsibilist account of justified belief. According to this, an epistemically responsible enquirer will aim at having true beliefs and will therefore perform all the necessary actions to collect sufficient evidence to support his belief, such as checking a broad enough range of e.g. web pages and comparing them to other types of sources (cf. Bråten, Brandmo, & Kammerer, 2018). There are, however, three cases where the enquirer may fail to acquire justification for his belief: 1) the enquirer neglects performing a proper search, 2) the enquirer performs a proper enquiry, but the results do not support his belief or 3) the activity to justify his belief is not possible, e.g. due to lack or impracticability of a technology. Assuming that an enquirer is literate enough to avoid the first case, he can still fail as in cases 2 and 3. In cases of internet searches the problem is that, due to the covert search logics, the enquirer may not even know that he has failed. He may believe that he has performed a proper search but, due to the search engine’s filtering and ranking, the results may not provide the full picture of facts required to justify or rule out the belief. Furthermore, due to the covert operating logic, it is impracticable (case #3) for the enquirer to assess the quality of the set of sources provided by the search engine.

As shown above, the past decades’ technological development has induced changes in how individuals acquire information, and blurred the boundaries between personal knowledge and external information. The problem is not about using external memory aids or systems for offloading information (Säljö, 2012). As Säljö explains, man started developing external symbolic storages and artificial memory systems thousands of years ago, and memory aids such as Otto’s physical notebook (Smart, 2012) or address books in smartphones are everyday tools used to offload information from our memory. However, there is a risk that (especially young) users not only offload information but perhaps even outsource cognitive processes, since they may lack the epistemic competencies and practices required in this new information ecology (cf. Bhatt & MacKenzie, 2019; Fisher et al., 2015; Säljö, 2012; Sparrow et al., 2011).

To provide a rationale for the approach of this study, the following sections will briefly review 1) epistemic beliefs as a research area, 2) how epistemic beliefs may relate to learning and 3) dimensions and tools for measuring epistemic beliefs. These sections also aim at explaining how this study was delimited and why some aspects, albeit frequently discussed in other studies, were not included in this study.

William G. Perry’s (1970) study of college students’ ideas regarding Source and Certainty of knowledge is commonly regarded as the starting point for research on epistemic beliefs or personal epistemology. Over the past decades, epistemic beliefs have been conceptualized in different ways (cf. Schraw, 2013). Some researchers conceive them as broad and as developing in a stage-like manner. Other researchers conceive them as a set of more or less independent dimensions expressing beliefs about knowledge and learning, Marlene Schommer (1990; 1993) being the first in this line of research. The term ‘epistemic beliefs’ will be used here since the study will focus on the respondents’ (implicit and unconscious) views of knowledge, not their theories of knowledge or epistemology (cf. Kitchener, 2002; Hofer, 2008, p. 5).

The works during the 1990s of Marlene Schommer (1990; 1993, later Schommer-Aikins) and Barbara K. Hofer and Paul R. Pintrich (1997) in developing research around epistemological theories are important to acknowledge. During the first decade of this century, research around epistemic beliefs increased and extended from Perry’s original North American, white, elite, male college students context to other age groups and geographical and cultural contexts. For extensive overviews, please see the works by Hofer & Pintrich (2002), Niessen, Vermunt, Abma, Widdershoven, & van der Vleuten (2004), deBacker, Crowson, Beesley, Thoma, & Hestevold (2008) and Khine (2008). Further, see the more recent works by Schraw (2013), Greene, Sandoval, & Bråten (2016), Bernholt, Gruber, & Moschner (2017) and Knight et al. (2017), out of which the three latter were not yet available at the time of planning this study.

Domain-specificity and domain differences have been issues throughout the years. The initial assumption, that one’s epistemic beliefs are general across domains, has been questioned and instead, it has been suggested that one can hold different epistemic beliefs, depending on the field of knowledge one is dealing with (Muis, Bendixen, & Haerle, 2006). The longitudinal study by Trautwein & Lüdtke (2007), albeit focusing on the certainty dimension only, confirmed the hard-soft difference but also that students aiming at certain college programmes differed regarding their beliefs already at the end of their upper secondary education. In their large review, Muis et al. (2006) noted that empirical research had been presented in support of both domain-general and domain-specific epistemic beliefs, and that they may co-exist and possibly interact. The suggestions by Muis et al. were strongly supported by both Hofer (2006) and Alexander (2006). To conclude, I acknowledge the co-existence of and interaction between domain-general and domain-specific epistemic beliefs. The question regarding domain-generality vs. domain-specificity was, however, not the focus of the present study.

The development of self-report instruments for measuring epistemic beliefs has encountered several challenges. In his review article, Schraw notes that there has been disagreement about the underlying conceptual structure, and replications of exploratory factor analyses have often failed (hereafter, EFA and CFA will be used for exploratory and confirmatory factor analysis, respectively). Common problems have been that items load in an unexpected manner, often resulting in fewer factors or another factor structure than anticipated in the underlying conceptual model, too few items loading per factor, and the resulting model showing a low explanation score (Schraw, 2013).

In the present study, I will subscribe to the line of research that considers the concept of epistemic beliefs as multidimensional. Assuming that hitherto described dimension sets are not sufficient to describe epistemic beliefs in the new information ecology, I attempt to introduce some new dimensions. The aim of testing new dimensions required starting on a general level and therefore, the questionnaire items (except for the internet-related items) did not refer to any specific discipline or context (section Instrument construction).

Alongside motivation and cognitive styles, the concept of epistemic beliefs is an important factor affecting learning and study success. Hofer & Pintrich (1997) called for more research to understand how students’ epistemic beliefs may influence learning performance. Further, they suggested that the type of learning tasks may shape the students’ epistemic beliefs, as shown later by Kienhues, Bromme, & Stahl (2008).

Brownlee, Walker, Lennox, Exley, & Pearce (2009) approached the topic of epistemic beliefs qualitatively, and their results highlight that first-year students may hold subjectivist or objectivist core beliefs that may decrease their ability to engage in critical thinking, required in higher education. Walker et al. (2009) also approached first-year students and identified some students being at risk of having difficulties in higher education due to their naïve beliefs about learning and knowing.

There is also evidence suggesting cultural differences. Zhang & Watkins (2001) observed that Chinese students’ cognitive-developmental patterns were the opposite of the patterns observed in the U.S. sample. Their results also indicate that epistemic beliefs are not static but developing (cf. Kienhues et al., 2008). Further, Hofer (2008, pp. 11-12) observed differences between Japanese and US college students such that US students had more sophisticated beliefs about the factors describing certainty, simplicity, source and justification of knowledge.

Education is moving towards methods of teaching and learning that often involve using internet-based resources (e.g. the flipped classroom, Knewton, 2011). These methods require more self-regulation on the part of the student, and e.g. Bråten (2008, pp. 369-370) highlights the risk that students with naïve epistemic beliefs may tend to over-rely on internet-based resources.

Regarding the changes in teaching methods, it is worth noting that teachers’ choices of pedagogical activities and learning settings are also influenced, perhaps unconsciously, by their own epistemic beliefs (Palmer & Marra, 2008, p. 337). An overall awareness regarding epistemic beliefs is called for among teachers at all levels of education. An interesting attempt to support this awareness is the theoretical model linking epistemic beliefs and self-regulation suggested by Muis (2007), where epistemic beliefs facilitate self-regulation and play a crucial role in all four phases (Task definition, Goal setting, Enactment and Evaluation) of the learning process.

An example from a constructivist education context (PBL) is the study by Otting, Zwaal, Tempelaar, & Gijselaers (2010), where the results showed a connection between conceptions of expert knowledge and traditional conceptions of teaching and learning on one hand, and on the other hand a connection between learning effort and a constructivist conception of teaching and learning.

The examples above illustrate that there is much going on within the educational context, most importantly that education is moving from being teacher- and subject-centred towards being more student- and learning-centred. The development of the technological structures around ICT is increasingly beyond the control of the educational system. However, learning analytics is an area where education is actively applying ICT: the core characteristic is the generation of high-resolution data about various types of [learning] actions (Knight, Wise, & Chen, 2017), and applying knowledge from multidisciplinary perspectives such as business intelligence, web analytics and data mining for analysis purposes (Ferguson, 2012). Thus, learning analytics can generate real-time individual and group performance information with potential to support teachers’ decision-making (Knight, Wise, & Chen, 2017). Knight et al. (2017) present a novel approach as they explore how students’ epistemic beliefs predict e.g. students’ search behaviour (traced using learning analytics methods). Their results did not show a convincing predictive value, whereas the results by Pieschl, Stallmann, & Bromme (2014) were a bit more encouraging. This issue is further commented on in section Internet-specific epistemic beliefs.

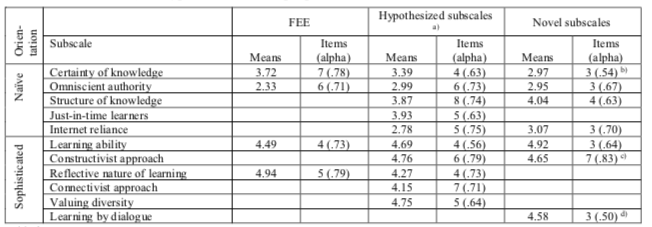

Within the line of investigation that regards epistemic beliefs as multidimensional, self-report instruments have been developed to capture the dimensions of epistemic beliefs. In her original 63-item Schommer Epistemological Questionnaire (SEQ), Schommer (1990; 1998) suggested the dimensions Simple knowledge, Certain knowledge, Innate ability, Quick learning and Omniscient authority. Using EFA, Schommer managed to extract four but not the Omniscient authority dimension. Thus, the dimensions described views on both knowledge and learning. Several authors (e.g. Hofer & Pintrich, 1997) have criticized Schommer for not performing factor analysis on the 63 original items but using 12 subscale scores (packages) based on those items, as variables. Still, Schommer’s questionnaire has been the starting point for a large part of later development regarding questionnaire-based instruments (for an overview, please see Niessen et al., 2004), out of which the following instruments, besides the SEQ, were used as reference in the present study:

- Wood & Kardash (2002) developed the Epistemological Beliefs Survey (EBS) containing 38 items, out of which 32 stemmed from or resembled items in the SEQ, and covering two SEQ dimensions.

- Schraw, Bendixen, & Dunkle (2002) developed the Epistemic Beliefs Inventory (EBI) containing 28 items, out of which 17 stemmed from or resembled items in the SEQ. EBI reflected the same dimensions as the SEQ.

- Moschner, Gruber, & Studienstiftungsarbeitsgruppe EPI (2005) developed the 43-item Fragebogen zur Erfassung epistemischer Überzeugungen (Questionnaire for capturing epistemic beliefs, hereafter FEE) containing nine items from SEQ. FEE included the three SEQ dimensions Certainty of knowledge, Learning ability and Omniscient authority. Additionally, the FEE proposed five new dimensions labelled Social aspects of knowledge, Value of knowledge, Culture related aspects of knowledge, Gender related approaches to knowledge and Reflective nature of knowledge.

2.4.1 Knowledge, knowing and learning

The discussion whether epistemic beliefs should be limited to beliefs about knowledge and knowing, or whether beliefs about learning should be included, has been ongoing throughout the decades. Hofer & Pintrich (1997) recommended excluding beliefs about learning for the sake of clarity of the concept of epistemic beliefs. Instead, they retained Certainty and Simplicity of knowledge (describing nature of knowledge) and proposed the dimensions Source of knowledge and Justification for knowing to describe the nature of knowing.

Schommer introduced an embedded systemic model that included Beliefs about Ways of Knowing, interplaying with Beliefs about Knowledge and Beliefs about Learning, i.e. beliefs about knowledge and learning as separate constructs but within the same system (Schommer-Aikins, 2004).

Sandoval (2005) warned against conflation of the concepts. Although beliefs about knowledge will probably influence one’s beliefs about learning, Sandoval proposed that they should be investigated as separate constructs. In a comment to the discussion, Elby (2009) suggested that it is too early to decide and therefore, views on learning should at least for the time being be included in the concept of epistemic beliefs for further empirical and theoretical development.

For the present study, data were collected regarding beliefs about both knowledge and learning and consequently, the analyses include both aspects. This approach is also supported by previous research presented in the section Epistemic beliefs and learning.

2.4.2 Internet-specific epistemic beliefs

The point of departure for this study, the tendency not to look up information until needed and to rely on internet-based sources, is close to the research regarding internet-specific epistemic beliefs by Bråten, Strømsø and their teams. In 2005, they developed the Internet Specific Epistemic Questionnaire (ISEQ: Bråten, Strømsø, & Samuelstuen, 2005), which was based on the four dimensions described by Hofer & Pintrich (1997) and thus omitted learning dimensions. In performing EFA, Bråten et al. used Maximum Likelihood (hereafter ML) as extraction method together with an oblique rotation method but extracted only two factors. They labelled the first one General Internet Epistemology, which included beliefs concerning the certainty and simplicity of internet-based knowledge, as well as beliefs concerning the internet as a source of knowledge, i.e. three dimensions in one factor. The second factor was labelled Justification for Knowing and described whether internet-based knowledge claims could be accepted without critical evaluation, or whether they should be critically evaluated using multiple sources, reasoning and prior knowledge.

All eighteen ISEQ items referred to internet and thus, all questions connected explicitly and exclusively to the internet context. Further, when reading the ISEQ General Internet Epistemology items it seems obvious that they do not actually reflect the certainty or structure of knowledge (cf. corresponding items in Table 1) but rather, they mainly express the coverage and availability of information on the internet. Thus, the ISEQ seems to leave questions open about the respondent’s beliefs regarding certainty and simplicity of knowledge in general, about the beliefs regarding other sources of knowledge, and how these beliefs relate to each other; unanswered questions constituting a research gap.

In a subsequent study, Bråten & Strømsø (2006) applied parts of the SEQ (Schommer, 1990), but not the ISEQ, to explore the connection between epistemic beliefs and internet-based search and communication activities. It turned out, for example, that students who believed in quick learning tended to overlook the importance of critically evaluating web-based resources. In another study, based on 17 out of 18 items in the ISEQ item set, the authors extracted only three factors (using ML and Direct Oblimin): Certainty and source of knowledge, Justification for knowing and Structure of knowledge (Strømsø & Bråten, 2010).

The ISEQ has also been applied in other contexts and for other purposes: Karimi (2014), exploring the connection between internet-specific epistemic beliefs and grammar achievement, extracted the same three factors as Strømsø & Bråten (2010), although with Varimax rotation. Chiu, Liang, & Tsai (2013) used a Chinese translation of the ISEQ, and applied an EFA method (apparently with oblique rotation) but upon only twelve items. These authors did not, however, extract the ISEQ dimensions as described by Bråten et al. (2005) but instead, the four dimensions originally suggested by Hofer & Pintrich (1997), i.e. Certainty, Simplicity and Source of knowledge and Justification for knowing, but using items specifically denoting an internet-based context. Kammerer & Gerjets (2012) applied the ISEQ to categorize users for comparison, but they only used eight items attributed to the ISEQ dimension Certainty and Source of knowledge and thus did not test the factor structure proposed in the original ISEQ.

The study by Knight et al. (2017) exemplifies a research approach linking epistemic beliefs with log data analytics. They used the ISEQ in an extensive study to explore whether the two-factor ISEQ scores could predict e.g. trustworthiness ratings of internet-based sources or traced search behaviour. According to their results, the factor scores did not predict search behaviour, and they had only small predictive value for trustworthiness rating. The approach by Knight et al. is interesting and relevant but raises the question: Could the connections to search behaviour have turned out differently had they not used the two-factor ISEQ, where the General Internet Epistemology factor contains a mix of Certainty, Structure and Source of knowledge? For example, the results by Pieschl et al. (2014) indicate that students’ epistemic beliefs influence how they approach complex tasks.

To conclude, epistemic beliefs have been explored also in relation to internet-based information, but the picture is disparate. The studies referred to above, as well as many other studies, suffer from the problems addressed by Schraw (2013). The studies published prior to the present data collection (Bråten et al., 2005; Bråten & Strømsø, 2006; Strømsø & Bråten, 2010) focused on beliefs about internet-based information without actually relating these beliefs to beliefs about knowledge based on other information sources. The studies referred to above also leave the question open, whether internet should be regarded as an authority or knowledge source, or a specific context (cf. Grossnickle Peterson, Alexander, & List, 2017, p. 262).

2.4.3 Justification for knowing

Hofer & Pintrich (1997) introduced Justification for knowing as a dimension, which was later supported by several researchers. Both Alexander (2006) and Greene, Azevedo, & Torney-Purta (2008) have noted that this dimension is least developed, and that exploring justification is more challenging than exploring other dimensions. This assumption seems well founded considering the complexity of the justification aspect, e.g. in terms of the responsibilist account of justified belief suggested by Miller & Record (2013) (see section Personal knowledge, external information).

Greene et al. (2008) point out two aspects that are part of the challenge in investigating the justification dimension. First, considering the number of different kinds of justification identified in philosophy, justification as part of the epistemic beliefs model will probably need to be described by multiple factors rather than one single factor. Further, Greene et al. suggest that a person needs to have a sophisticated ontology of a domain before issues of justification, such as critical thinking, become relevant. This seems congruent both with Bloom’s original cognitive process dimensions and especially with the knowledge dimensions described later by Krathwohl (2002): issues of justification are probably far more relevant when applying, analysing or evaluating conceptual knowledge than when recalling facts.

The above suggestion by Greene et al. comes to expression in a recent study by Bråten, Brandmo & Kammerer (2018), where they delimit the context to internet and the domain to that of educational topics within teacher education. Their study focuses solely on the justification dimension, approaching it as a three-dimensional concept including justification by authority, justification by multiple sources and justification against prior personal knowledge and reasoning. As a result, they present the validated Internet-Specific Epistemic Justification Inventory (ISEJ).

Against the background of the considerations referred to above, and the fact that epistemic beliefs in the new information ecology were totally uncharted territory, it seemed appropriate to leave the Justification dimension outside this investigation. Hence, the FEE instrument (Moschner et al., 2005) was chosen as a starting point (see section Instrument construction).

Capturing all dimensions of epistemic beliefs (or cognition, cf. Greene et al., 2008) while at the same time adding and testing new dimensions would be both adventurous and beyond this study. Therefore, while acknowledging that epistemic beliefs consist of multiple dimensions developing over time, this study adopts a narrow focus on capturing a snapshot of the participants’ current epistemic beliefs, including beliefs in internet-based information. Thus, the Justification dimension as well as the topics regarding subject-, domain-, discipline-, culture- or gender-specificity of epistemic beliefs (see e.g. DeBacker et al., 2008) are beyond the scope of this study.

The approach of this study is openly explorative in testing whether it is possible, overall, to extend the existing instruments and their dimension sets with new dimensions of epistemic beliefs, and specifically to capture such ways of relating to knowledge that have become common among frequent internet users during the past decades. Further, this study will explore the relation between existing epistemic dimensions and those describing internet-based knowledge and knowing.

Unlike the ISEQ (Bråten et al., 2005), this study does not aim to explore how individuals justify internet-based information, but rather to explore whether and to what extent individuals rely on and prefer internet-based information sources, and how this preference relates to other epistemic dimensions. The investigation is framed in a single research question:

(How) can the set of epistemic beliefs dimensions be extended so that it also expresses a googling attitude?

The research question is openly phrased since, although research on epistemic beliefs has been going on for some time, the proposed dimensions are in uncharted territory. For the sake of clarity, I will use the term original dimensions for those dimensions described in or stemming from Schommer’s SEQ (1990). Hypothesized dimensions will be used to denote suggested dimensions until their existence has been confirmed, after which they are denoted as novel dimensions or scales in the proposed model, which is the endpoint of the present study.

By way of introduction to this section, I provide a rough outline for instrument construction and data collection. The first version of the instrument was created for the data collection in August 2011. The instrument was evaluated so that a revised version was used for the second data collection in August 2012, which resulted in the material being reported here. The usability and validity of data from 2012 are commented on in the discussion section. It should be noted that after the current data were collected, new studies describing further development have been published. The present instrument was, naturally, based on instruments that were published and available prior to 2012.

The FEE questionnaire developed by Moschner et al. (2005) combined experiences from previous instruments and also contained some potentially interesting extensions. Therefore, the FEE was taken as the point of departure for constructing the first version of the on-line survey called ‘Me and my knowledge’. A replication using the FEE-specific items was performed on the first data set collected in 2011 (reported in Ståhl & Mildén, 2017). Due to unsuccessful replication, the instrument was revised prior to the 2012 data collection: the five new dimensions suggested in FEE were omitted, and Structure of knowledge items as well as some other items were included, based on item level analysis. In addition, some items describing the hypothesized subscales were reversely phrased. Table 1 shows the entire instrument, item descriptives and item associations before and after analyses.

Since Swedish and English are the working languages of the university, the questionnaire was set up in both languages. To ensure comprehensibility, both Swedish-speaking domestic and English-speaking international students were involved in read-aloud sessions during instrument construction.

An important aspect of the cultural adaptation of the questionnaire was rephrasing the questions into first person present tense, as suggested e.g. by Kitchener (2002) and Schommer-Aikins (2004, p. 23). The main motive was to ensure a first-person perspective: the phrasing should clearly signal that the researchers were interested in knowing what the student herself thinks, not what she thinks that people in general think, or what is socially desirable to think about a topic. During the read-aloud sessions, the students provided valuable feedback acknowledging the need for cultural adaptation and inducing some further rephrasing. Overall, the students’ feedback supported the choice to use direct and active wording. The items were consistently generic (not domain- or discipline-specific), and the instructions did in no way refer to relating the responses to any specific subject, academic field or context (cf. Wood & Kardash, 2002, p. 244; Muis et al., 2006, p. 25).

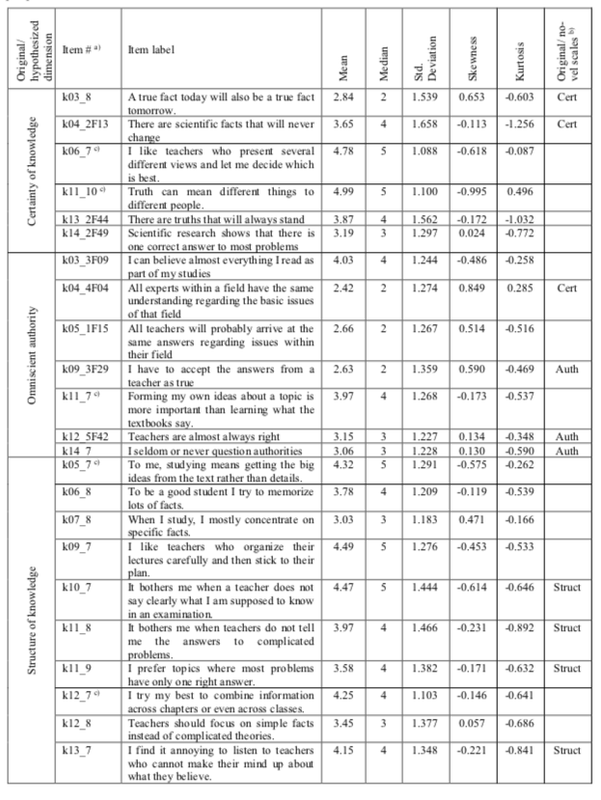

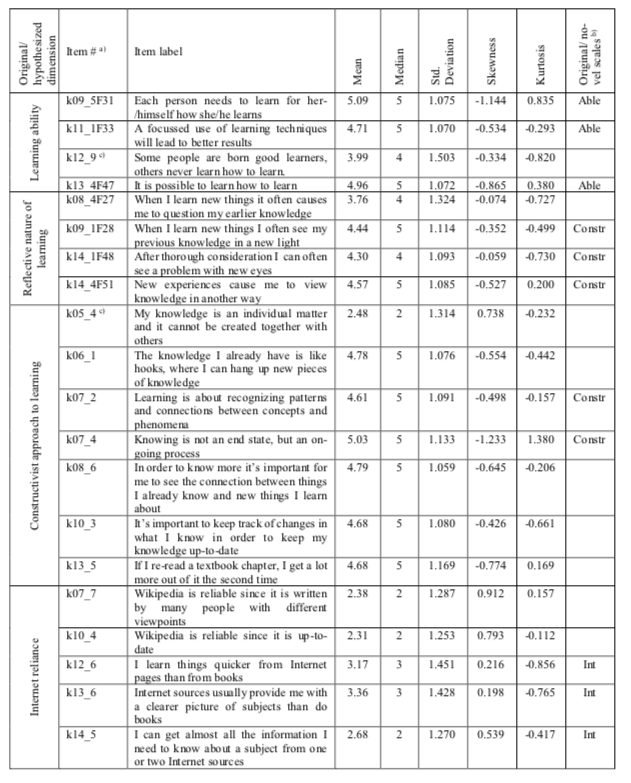

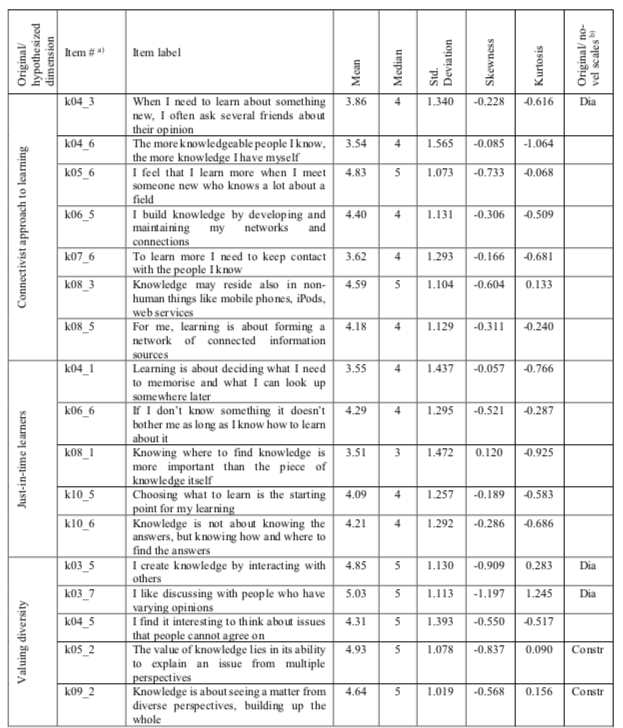

Table 1

Questionnaire items in original and hypothesized dimensions, including item descriptives and item use in the proposed model

Table footnotes

a) The number after 'k' refers to the page number (03-14) in the web questionnaire. The number after 'F' refers to the original FEE numbering.

b) Able - Learning ability; Auth - Omniscient authority; Cert - Certainty of knowledge; Constr - Constructivist approach; Dia - Learning by dialogue; Int - Internet reliance; Struct - Structure of knowledge

c) Item phrasing is reverse compared to other items in the same dimension.

3.1.1 Previously established dimensions

The FEE questionnaire (Moschner et al., 2005) included the original SEQ dimensions Certainty of knowledge, Omniscient authority and Learning ability. Unfortunately, the dimension Structure (or Simplicity) of knowledge was excluded from the FEE but was included in the 2012 survey being reported here (Table 1).

Justification of knowledge should undoubtedly be a part of the epistemic beliefs dimension set. However, the new students (see section Participants and data collection) that were involved as informants could hardly be expected to possess a sophisticated ontology of the domain they were just entering to study (cf. Greene et al., 2008). Based upon this, upon previously presented considerations (section Justification for knowing) and upon the scope of the study, the Justification dimension was omitted at this stage.

3.1.2 Hypothesized dimensions

Out of the five new dimensions suggested in the FEE, only Reflective nature of knowledge was used in this study, and the items associated with it were rephrased to reflect Reflective nature of learning. This dimension deals with the learning aspect and was intended to express a reflective stance towards new knowledge.

The debate regarding Digital Natives did not produce an actual definition for Digital Natives but instead, researchers published different descriptions about how the (supposedly) digital generation acted and behaved (cf. Ståhl, 2017). Therefore, the dimensions described below were constructed with a starting point in descriptions regarding attitudes towards knowledge and learning, as reported in various studies. The instrument also set out to test whether the suggested attributes could be identified within this sample.

The descriptions of connectivism (Downes, 2007; Siemens, 2005; 2006, pp. 31, 91) together with Anderson & Balsamo (2008, p. 244) stating that “They treat their affiliation networks as informal Delphi groups” have contributed to the items proposed to describe a Connectivist approach to learning (hereafter the short forms Connectivist approach and Constructivist approach will be used).

A Constructivist approach to learning has not yet been suggested in previous instruments, although some items in the dimension Knowledge Construction and Modification suggested by Wood & Kardash (2002, p. 250) and the dimension Reflective nature of knowledge suggested by Moschner et al. (2005) point in this direction. The writings of Siemens (2006, pp. 6, 20, 31) have also provided input to the items proposed to describe a constructivist approach.

Anderson & Balsamo (2008, p. 244) described the young generation as “…knowing and being confident where to find information once they need it”. Siemens (2006, p. 31) described deciding what to memorise and choosing what to learn as characteristics in connectivist learning, inspiring the construction of items describing the hypothesized dimension Just-in-time learning.

Reliance on internet is an integral part of the googling mind-set. At the time of planning this research the ISEQ had been introduced (Bråten et al., 2005) but as mentioned above (section Internet-specific epistemic beliefs), the ISEQ items focussed exclusively on internet-based information. Thus, the five items concerning internet-based knowledge in the present instrument were generated from literature regarding the so-called Digital Natives and the Net Generation (Prensky, 2001; Siemens, 2005; Anderson & Balsamo, 2008), and their preference for internet sources instead of printed sources (cf. Head & Eisenberg, 2010; Purcell et al., 2012, p. 33). The items were phrased to express how the googling mind-set reflects a reliance in that any information you need can always be found on internet and accordingly, the dimension was labelled Internet reliance.

Siemens (2006, pp. 16, 31, 56, 117) described Valuing diversity as a central trait in connectivism, which requires interaction (Downes, 2007, p. 78) and also involves exposing oneself to and valuing different opinions, all contributing to the individual learning process. This trait, requiring “… the widest possible spectrum of points of view…” (Siemens, 2006, p. 16), can be regarded as an expression of both a general scholarly approach and the epistemic development from realist over absolutist and multiplist to evaluativist understanding (Kuhn & Weinstock, 2002, p. 124).

The present instrument includes four previously described and six hypothesized dimensions, altogether 60 items (Table 1).

The study was part of a university development project with the objective of collecting information about the new students’ mind-sets to develop teaching and learning practices. The university’s Board on Ethics approved the project research plan, including procedures for data collection, analysis and reporting.

Data were collected among all new students in August 2011 and 2012 (N = 476/440). Since epistemic beliefs can change through intervention (cf. Kienhues et al., 2008), it was crucial to get a “snapshot” of the students’ epistemic beliefs by collecting data during the very first week of the semester, before the students were exposed to study subjects or pedagogical influences at the university. Data collection was organised during compulsory and scheduled ICT Level Test sessions, where students first completed another survey called ‘ICT, media and me’, then the compulsory ICT Driving License Level Tests and finally the survey ‘Me and my knowledge’.

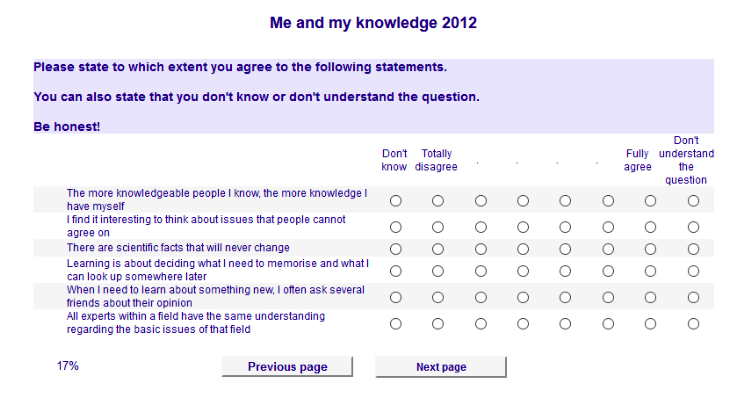

Figure 1 On-line questionnaire screenshot

The students were introduced to the objectives of the project, and informed orally and in writing that although the ICT level tests were compulsory, the surveys were voluntary and did not include any financial or other incentives. Due to the survey being an operationalization of the university’s statutory obligation to continuously develop its education, informed consent was registered following a simplified procedure. The students were informed that by (performing the action of) filling in the questionnaire, they express their consent for the data being used for the purposes described in the information sheet and in the Description of the Scientific Research Data File as required in the legislation concerning personal data in research (Personal Data Act, 1999). Accordingly, the students had the opportunity to withdraw their permission by contacting the researcher by a given date, after which the data set was anonymized. The students were also introduced into the functionality of the questionnaires and informed that support was provided if needed.

The survey was presented in an on-line questionnaire using a 6-point Likert-type response format (Figure 1). When applying the 63-item SEQ, Wood & Kardash (2002, p. 244) received student comments indicating respondents’ difficulties in understanding certain items. Although some researchers (e.g. Martin, 2005, p. 728) discourage the use of ‘don’t know’ options, the scale in this questionnaire was supplemented with two non-substantial options, ‘don’t know’ and ‘don’t understand’. This is partly supported by Muis et al. (2006, p. 25), noting that it has not been empirically studied what individuals actually think as they fill out questionnaires. Providing both options was especially important when introducing new items, since these options provided information regarding comprehensibility, potentially valuable when considering items to exclude (cf. Finch, Immekus, & French, 2016, p. 144). Further, the non-substantial options were placed on both sides of the substantial options in order not to distort the visual midpoint of the Likert-type response format (cf. Tourangeau, Couper, & Conrad, 2004).

In survey presentation, it was necessary to prevent fatigue effect and satisficing (cf. Cape, 2010), and any effect where question context or order might influence question interpretation (cf. Martin, 2005, p. 726; Tourangeau et al., 2004). Therefore, a progress indicator was included and the items were distributed over twelve pages containing four to six items each, which also improved readability. Further, to prevent inter-item influence, each subscale’s items were distributed over different pages (e.g. the page in Figure 1 containing items from five subscales) and the survey service was set to randomise item order within each page.

The present study is based on data collected in 2012, where 371 students chose to complete the survey ‘Me and my knowledge’. Only those cases containing substantial responses to more than 70% of the items were retained for further analyses (n = 348). The 23 excluded cases had responded only to first-page items and were therefore regarded as dropouts. The complete data set with 371 cases exhibited missing values increasing from 4.2% up to 11.7% on page level, whereas this trend in the 348-case subsample developed from 2.5% to 7.0%. This, together with the dropouts, indicates that most respondents who started the survey also completed it, and that an actual fatigue effect was avoided. On item level, the portion of missing values ranged from 1.4% to 10.1%, where the two Certainty of knowledge items k13_2F44 and k14_2F49 (Table 1) showed the highest portions of missing values, mostly ‘don’t know’ responses. The highest ‘don’t understand’ portions occurred for three items representing the dimensions Just-in-time learning (k04_1), Constructivist approach (k07_2) and Connectivist approach (k08_5).

Since the questionnaire applied a Likert-type response format producing data on an ordinal scale, it is not meaningful to analyse distribution or assess normality on item level (cf. Carifio & Perla, 2007) but instead, analysis of the actual scales is postponed to the discussion section. For those calling for an item level analysis it can be mentioned that for each item, the response value ranged over the whole scale (1 to 6). The items showed a standard deviation between 1.02 and 1.66, a skewness between -1.23 and 0.91 and a kurtosis between -1.26 and 1.38. The criterion of the skewness and kurtosis value being within the range ±1 was met regarding 57 and 55 items, respectively. The Shapiro-Wilk test suggested non-normal distribution, whereas the Kolmogorov-Smirnov test suggested normal distribution throughout all items. A visual inspection of histograms, normal Q-Q plots and box plots showed that the items were approximately normally distributed. For the items showing skewness or kurtosis outside the ±1 range, the deviation was minor and further, the sample size was large enough to reduce a possible detrimental effect (cf. Hair, Black, Babin, & Anderson, 2010). Based on the aforementioned criteria, the items were considered as normally distributed.
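The ±1 criterion for skewness and kurtosis can be sketched as follows. The sketch assumes the bias-adjusted sample formulas that common statistical packages report (adjusted skewness and excess kurtosis); whether these are exactly the estimators behind the values above is an assumption, and the function names and the toy item are hypothetical.

```python
import math


def sample_skew_kurtosis(values):
    """Return (adjusted skewness, adjusted excess kurtosis) of a sample."""
    n = len(values)
    if n < 4:
        raise ValueError("at least four observations are required")
    mean = sum(values) / n
    # Sample standard deviation with n - 1 in the denominator.
    s = math.sqrt(sum((v - mean) ** 2 for v in values) / (n - 1))
    if s == 0:
        raise ValueError("the sample has zero variance")
    z3 = sum(((v - mean) / s) ** 3 for v in values)
    z4 = sum(((v - mean) / s) ** 4 for v in values)
    skew = n / ((n - 1) * (n - 2)) * z3
    kurt = (n * (n + 1) / ((n - 1) * (n - 2) * (n - 3)) * z4
            - 3 * (n - 1) ** 2 / ((n - 2) * (n - 3)))
    return skew, kurt


def within_range(value, limit=1.0):
    """Check the ±limit criterion applied to skewness and kurtosis."""
    return abs(value) <= limit


# Toy item: one response at each scale point (a flat distribution).
toy_item = [1, 2, 3, 4, 5, 6]
skew, kurt = sample_skew_kurtosis(toy_item)
skew_ok, kurt_ok = within_range(skew), within_range(kurt)
```

A perfectly flat item such as the toy example is symmetric, so it passes the skewness criterion, but it is platykurtic enough to fall outside the ±1 range for kurtosis.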

The current 348-case subsample holds students from twelve degree programmes, both domestic and international students (86.8% / 13.2%), and a gender distribution holding 66% female students. The mean age was 21.7 years, with 91% being born in 1986-1995. For this study, sample demographics should be reviewed in relation to access to internet resources.

Internet and publicly available search engines were launched already in the mid-1990s and during the following ten years, search engine use was established (http://www.searchenginehistory.com/). 2011-2012 were the very years when internet services, previously available via computers, became truly ubiquitous due to 3G/4G-connected smartphones becoming everyday tools, and Finnish net operators offering affordable 3G/4G-subscriptions including generous mobile data. The mobile phone prevalence within both cohorts was close to 100%. Smartphone as a concept was not yet established and thus, the corresponding survey item was phrased “My mobile phone is connected to the internet”. From 2011 to 2012, the portion of users across the cohorts having an internet-connected phone increased among domestic students from 48.7 to 81.3% and among international students from 60.7 to 90.6%, within the total cohorts from 50.2 to 82.5%. This corresponds well with the national statistics, according to which 53% of those aged 16-24 had a smartphone in the spring of 2011 (OSF, 2011). At the time of data collection, the respondents had been exposed to computers, mobile phones and internet for on average 12, 10 and 9 years respectively. To conclude, the sample can be regarded as a rather typical Net Generation cohort.

Fabrigar, Wegener, MacCallum, & Strahan (1999) argue that Principal Component analysis is not a true method of factor analysis. They recommend the use of Maximum Likelihood, as later supported by Osborne (2014, p. 9) and Finch et al. (2016, p. 131). Thus, the analysis procedure starts with an EFA with ML as extraction method, followed by a validation procedure including EFA and CFA on split halves of the sample (Fabrigar et al., 1999; Fokkema & Greiff, 2017; Knight et al., 2017; Leal-Soto & Ferrer-Urbina, 2017; Osborne, 2014, pp. 6, 119-120; Tang, 2010). For all statistical tests, a significance level of .05 was used and in EFA and CFA procedures, the absolute loading value .32 was used as the threshold when assessing item (non-)loadings and cross-loadings (cf. Finch et al., 2016, p. 143). In table and diagram presentations, loadings <.32 are generally not displayed although during EFA procedures, low loadings were not suppressed since that may cause loss of valuable information (such as item k14_5, Table 3). The SPSS software package (SPSS, 2016b) was used for EFA procedures, and the CFA procedures were performed using the Amos software package (SPSS, 2016a).
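The role of the .32 threshold in sorting items into non-loading, single-loading and cross-loading can be sketched as below. The loadings and item labels are invented for illustration, and treating a loading of exactly .32 as salient is an assumption of this sketch.

```python
THRESHOLD = 0.32  # absolute loading used as cut-off in this study


def classify_item(loadings, threshold=THRESHOLD):
    """Classify one item from its pattern loadings.

    loadings maps a factor label to the item's loading on that factor.
    Returns a (status, salient_factors) tuple.
    """
    salient = [f for f, value in loadings.items() if abs(value) >= threshold]
    if not salient:
        return "non-loading", salient
    if len(salient) == 1:
        return "single-loading", salient
    return "cross-loading", salient


# Hypothetical pattern-matrix rows (not taken from Table 3).
pattern = {
    "item_a": {"F1": 0.61, "F2": 0.10, "F3": -0.05},
    "item_b": {"F1": 0.35, "F2": -0.41, "F3": 0.02},
    "item_c": {"F1": 0.12, "F2": 0.20, "F3": 0.18},
}
classification = {item: classify_item(row) for item, row in pattern.items()}
```

Because the absolute value is compared, a negative loading such as the one for `item_b` on `F2` counts as salient, which is why that item is flagged as cross-loading.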

To build analysis on true data, missing data values were not imputed, since any kind of replaced or imputed values are, after all, only estimates. This choice was made at the cost of listwise deletion reducing the number of cases in EFA, and missing values thwarting the use of modification indices to support refinement in CFA.

In this section, the results are presented together with analyses, since some results inform the subsequent steps. The reasoning behind e.g. item disposal, number of factors and factor labelling will be presented in conjunction with EFA on the complete item set.

4.1.1 Replicating original dimensions

For replication purposes, EFA was first performed on the 27 items associated with the original dimensions. Dysfunctional items (zero, low and cross-loading) were stepwise discarded (cf. Finch et al., 2016, pp. 143-144). A model based on 18 items showed good fit indices and was interpreted as a successful replication (despite ML extraction and Promax rotation).

4.1.2 Emerging dimensions

The 60-item questionnaire contained 33 items that were associated with six hypothesized dimensions: Reflective nature of learning, Connectivist approach, Just-in-time learning, Constructivist approach, Internet reliance and Valuing diversity (Table 1). Seeking inspiration from Bråten et al. (2005) and Trautwein & Lüdtke (2007) who analysed only one or two factors, this item subset was initially factor analysed separately in order to identify dysfunctional items.

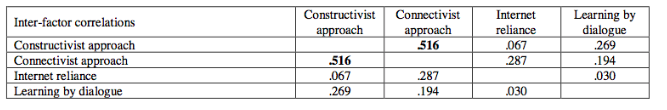

The EFA on the hypothesized dimensions was truly exploratory, including different rotation methods, varying the number of extracted factors and stepwise reduction of dysfunctional items (Finch et al., 2016, pp. 143-144; Osborne, 2014, pp. 17, 30-33). Using ML, Promax rotation and Listwise deletion, the EFA resulted in a four-factor model based on 23 items (n=191). The model showed good fit indices (Eigenvalues 6.35 to 1.5, 51% of variance explained; KMO=.864, Bartlett's Chi-Square=1397, df=253, Sig.<.001, Goodness-of-fit Test Chi-Square=182.7, df=167, Sig.=.192) and reflected three of the hypothesized dimensions: Constructivist approach, Connectivist approach, Internet reliance and a fourth one, now labelled Learning by dialogue. Each item loaded strongly on one factor without cross-loadings. Throughout the various models, Constructivist approach and Connectivist approach correlated strongly, and several of the other factors correlated weakly with each other (Table 2).
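The degrees of freedom reported for Bartlett's test and for the ML goodness-of-fit test follow directly from the number of items p and the number of extracted factors m. The short sketch below uses the standard formulas p(p-1)/2 and ((p-m)² - (p+m))/2; the function names are chosen for this illustration only.

```python
def bartlett_df(p):
    """Degrees of freedom of Bartlett's test of sphericity for p items."""
    return p * (p - 1) // 2


def ml_goodness_of_fit_df(p, m):
    """Degrees of freedom of the ML goodness-of-fit test, p items, m factors."""
    # (p - m)^2 and (p + m) always share parity, so the division is exact.
    return ((p - m) ** 2 - (p + m)) // 2


# Four-factor model based on 23 items.
df_bartlett_23 = bartlett_df(23)
df_fit_23_4 = ml_goodness_of_fit_df(23, 4)
```

For 23 items and four factors this gives 253 and 167, in agreement with the values reported above. The same functions give 325 and 164 for 26 items and seven factors, the values reported for the seven-factor models.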

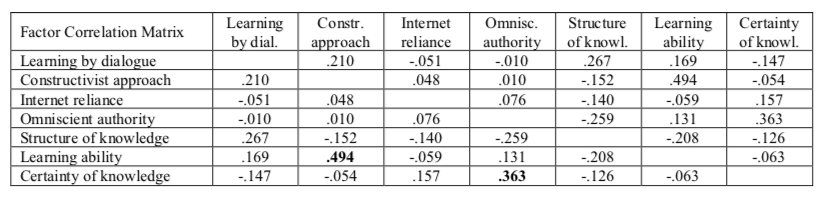

Table 2

Factor correlation matrix, four-factor model based on 23 new items

4.1.3 An extended set of dimensions

Since inter-factor correlation occurred in all the previous analyses, EFAs on the complete item set were performed using ML extraction and Promax or Oblimin as oblique rotation methods (cf. Finch et al., 2016, p. 133; Knight et al., 2017; Osborne, 2014, pp. 30-33; Strømsø & Bråten, 2010). The model was stepwise refined by removing low-loading and cross-loading items while simultaneously assessing their conceptual relevance, their communality estimates and their internal consistency within the anticipated scale. During the process, the six reversely phrased items occurring in four dimensions (Table 1) were discarded due to dysfunctionality. Thus, within all the hypothesized dimensions, the items were unidirectional.

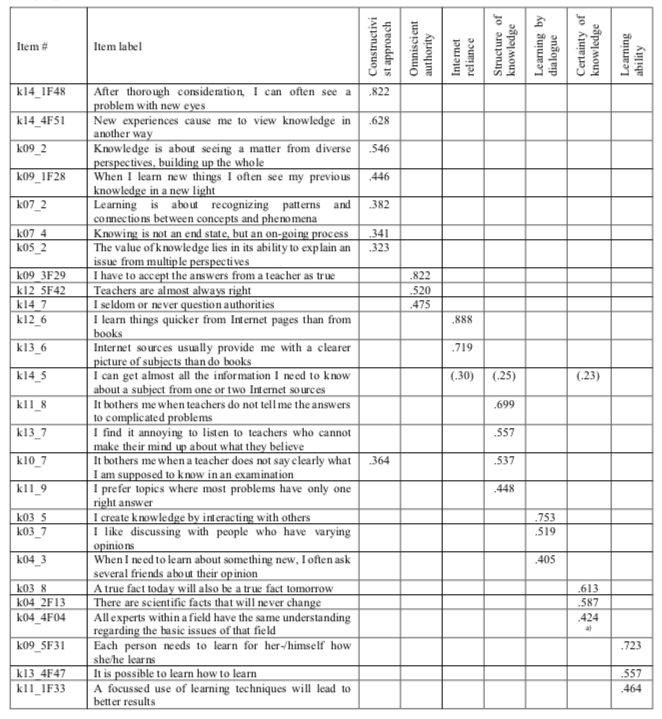

The refinement procedure boiled down to a model with seven factors and 26 items that fit the data reasonably well (Table 3). Both original and hypothesized dimensions appeared distinctly without cross-loadings (except for items k10_7 and k14_5), each dimension loaded on at least 3 items, and 25 out of 26 items loaded (>.32) on the anticipated factor.

Table 3

The proposed EFA model based on 26 items

Table footnotes

ML, Promax rotation converged in 16 iterations; Listwise deletion, n=195, Eigenvalues 4.85 .. 1.01, 59.3% of variance explained;

KMO=.782, Bartlett's Chi-Square=1468, df=325, Sig. <.001, Goodness-of-fit Test Chi-Square=169.8, df=164, Sig.=.361

a) Item loading not consistent with hypothesized dimension but conceptually coherent.

From this model, several items were dropped due to loading weakly or inconsistently with the hypothesized dimension. The retained original items loaded on the same factors as in the EQ, except for item k04_4F04 that was originally associated with Omniscient authority. The current loading on Certainty of knowledge can be regarded as conceptually coherent.

The issues regarding which items to discard, how to decide on the number of factors, and how to label the subscales require some comments. As Osborne (2014, pp. 17-18) notes, EFA is a low-stakes procedure and expressly exploratory. Accordingly, I entered the process with 60 items, a hypothesized underlying conceptual model, and used the statistical package (SPSS, 2016b) to provide suggestions for a factor model. During EFA iterations, the dysfunctional items were eventually revealed and discarded. Besides varying extraction and rotation, the search for an adequate number of factors included extracting factor sets ranging from two factors below up to two factors above the number suggested by the scree plot elbow (Osborne, 2014, p. 18). This method provided valuable information: increasing the number of factors caused related items to split over several factors, whereas reducing factors caused items to pile up on one factor, usually then holding items from dimensions that at the end turned out to correlate. Thus, the search for a factor model included weighing of theory, scree plot, item loadings and communalities, eigenvalues, internal consistencies and conceptual considerations.

During the EFA iterations, the hypothesized dimensions did not turn out quite as anticipated, which is simply part of the nature of explorative work (cf. Osborne, 2014, p. 17). In most of the explored models, the five hypothesized dimensions boiled down to three (Table 3). The dimension Learning by dialogue holds items from the suggested dimensions Valuing diversity and Connectivist approach, whereas the dimension Constructivist approach besides its own items also holds items from the hypothesized dimensions Valuing diversity and Reflective nature of learning. All three items originally associated with Internet reliance consistently loaded on that dimension (Table 1).

Retaining the items k14_5 and k10_7 violates the rule of using only strong, single-loading items and requires commenting. The internal consistency test showed that deleting the item k14_5 entailed a slightly improved alpha value, but at the cost of reducing the factor Internet reliance to only two items. Further, since the item communality value was reasonably good, the connection to Structure of knowledge occurred also in the path diagram, and the CFA indicated that discarding the item impaired fit indices, there was enough support for retaining the item.
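The internal consistency check referred to above ("alpha if item deleted") can be sketched with Cronbach's alpha computed from complete cases. The three-item toy scale below is invented and does not represent the Internet reliance items; the function names are likewise hypothetical.

```python
from statistics import variance


def cronbach_alpha(rows):
    """Cronbach's alpha for complete cases; rows are per-respondent item lists."""
    k = len(rows[0])
    if k < 2:
        raise ValueError("alpha requires at least two items")
    item_variances = [variance([row[i] for row in rows]) for i in range(k)]
    total_variance = variance([sum(row) for row in rows])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


def alpha_if_deleted(rows, drop_index):
    """Alpha of the scale after removing the item at drop_index."""
    reduced = [[v for i, v in enumerate(row) if i != drop_index] for row in rows]
    return cronbach_alpha(reduced)


# Invented responses from six respondents to a three-item scale.
toy_scale = [
    [2, 3, 3],
    [4, 4, 5],
    [5, 5, 4],
    [3, 2, 3],
    [6, 5, 6],
    [1, 2, 2],
]
alpha_full = cronbach_alpha(toy_scale)
alpha_without_third = alpha_if_deleted(toy_scale, 2)
```

Comparing the full-scale alpha with the alpha after deletion shows the kind of trade-off discussed above: a marginal gain in alpha has to be weighed against ending up with a two-item factor.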

As expected, the item k10_7 loaded strongly on the Structure of knowledge dimension, but surprisingly also on Constructivist approach. The item was retained since discarding it would have impaired the Structure of knowledge internal consistency considerably. The split half EFA suggested single-loading on Structure of knowledge, whereas the CFA suggested a connection to Constructivist approach and indicated that discarding the item impaired fit indices.

Three of the original dimensions (except Learning ability) correlated weakly with each other. Further, Constructivist approach correlated strongly (.506) with Learning ability and weakly (.282) with Learning by dialogue.

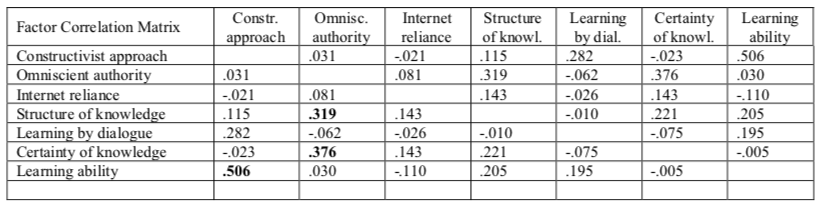

For the purpose of evaluating the stability of the model presented in Table 3, the data set was randomly split into two equal halves A and B, that were subject to EFA and CFA, respectively (cf. Fokkema & Greiff, 2017, p. 401).
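A random split into two equal halves can be sketched as follows. The seed value and the use of case numbers 1 to 348 as identifiers are assumptions made so that the illustration is reproducible; they do not describe the split actually performed in the statistical package.

```python
import random


def split_halves(case_ids, seed=2012):
    """Randomly split case identifiers into two halves A and B."""
    rng = random.Random(seed)   # local generator, leaves global state untouched
    shuffled = list(case_ids)   # copy so the caller's list is not reordered
    rng.shuffle(shuffled)
    middle = len(shuffled) // 2
    return shuffled[:middle], shuffled[middle:]


# Hypothetical identifiers for the 348 retained cases.
case_ids = list(range(1, 349))
half_a, half_b = split_halves(case_ids)
```

Each half then holds 174 cases before any listwise deletion is applied in the subsequent analyses.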

4.2.1 Exploratory split half

An EFA was performed with 26 items on the split half A using the same methods as in the initial model. The model arrived at (Table 4) did not show a one-to-one correspondence to the initial model (Table 3) but resembled it strongly, with 22 out of 26 items loading as anticipated. All the hypothesized dimensions were reflected in the seven factors, although two of the Constructivist approach items loaded on the Learning ability factor (a). Further, two items loaded on unexpected factors (b), and the Learning by dialogue item k03_5 caused a Heywood case. Still, the fit indices suggested that the proposed model, appearing almost similar in both Oblimin and Promax rotation, fit also the split data set fairly well.

Table 4

Exploratory Factor Analysis on split half A

Table footnotes

a) Item loading not consistent with hypothesized dimension but conceptually coherent

b) Item loading not consistent with hypothesized dimension, vague conceptual coherence

ML, Oblimin rotation converged in 12 iterations; Listwise deletion n=96, Eigenvalues 5.00 .. 1.06, 62.9% of variance explained;

KMO=.706; Bartlett's Chi-Square=928, df=325, Sig. <.001; Goodness-of-fit Test Chi-Square=179.5, df=164, Sig.=.193

As in the initial model, the Constructivist approach factor correlated strongly with the Learning ability factor (.494) and Omniscient authority correlated with Certainty of knowledge (.363).

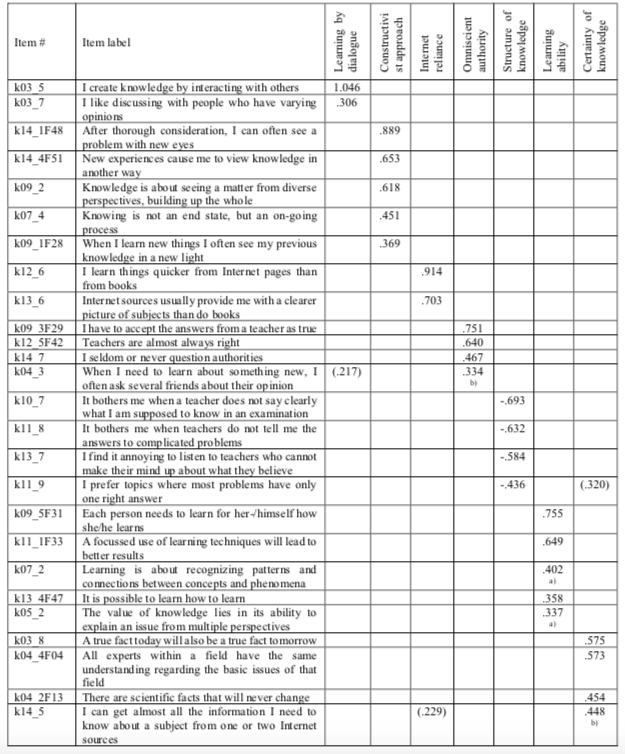

4.2.2 Confirmatory split half

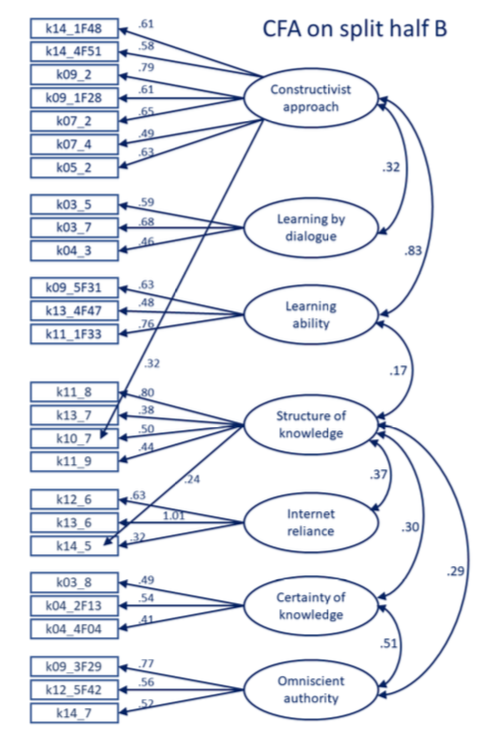

CFA was performed on the same 26 items as the previous EFA but on the split half B (n=174) of the data set. In the first step, conceptually irrelevant and low connections between latent variables were removed, which resulted in an initial model with partly insufficient fit indices. Assessing model fit and choice of cut-off criteria (in brackets) follow the recommendations by Schreiber, Nora, Stage, Barlow, & King (2006) and Hooper, Coughlan, & Mullen (2008).

Since the data set contained empty cells, it was not possible to utilize the feature where the Amos software would provide suggestions for modification (SPSS, 2016a). Instead, model refinement was performed manually, partly following loadings and correlations indicated in the previous EFA models (Tables 3 and 4), and partly by adding and removing connections based on conceptual considerations in an exploratory manner. Thus, some connections between latent variables, although weak, were retained, under the condition that they were conceptually defensible and contributed to improving fit indices. Then again, in some cases conceptually defensible connections had to be discarded if their loading value was low (mainly <.32) and retaining them impaired the fit indices. The procedure resulted in a conceptually defensible path diagram (Figure 2), similar to the EFA models (Tables 3 and 4) and reasonable although not perfect fit indices (Chi-square/df=1.504, RMSEA=.054, TLI=.821, CFI=.853, PCLOSE=.260). The item k13_6 caused a minor Heywood case (1.01), which was accepted since any attempt to manipulate constraints impaired fit indices.

Figure 2. Simplified CFA path diagram based on 26 items and split half B data set (cut-off criteria in brackets). Chi-square/df=1.504 (<2), RMSEA=.054 (<.06), TLI=.821 (≥.95), CFI=.853 (≥.95), PCLOSE=.260 (≥.05).
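As a plausibility check on the reported fit indices, RMSEA can be recomputed from the Chi-square/df ratio and the sample size. The sketch below assumes the common single-group definition, RMSEA = sqrt(max(χ²/df − 1, 0) / (N − 1)); the exact computation used by Amos is not stated in this article, and some programs divide by N rather than N − 1. The function name is illustrative.

```python
import math


def rmsea(chi2_over_df: float, n: int) -> float:
    """RMSEA from the chi-square/df ratio and sample size n
    (assumed single-group definition with N - 1 in the denominator)."""
    return math.sqrt(max(chi2_over_df - 1.0, 0.0) / (n - 1))


# Values taken from the text: Chi-square/df = 1.504, split half B n = 174
print(round(rmsea(1.504, 174), 3))  # 0.054
```

Under this assumption, the recomputed value matches the RMSEA of .054 reported for the CFA model.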

After the seven dimensions had been identified (Table 3), they appeared stable throughout the succeeding analyses. In some cases, items associated with the dimensions Constructivist approach and Learning ability cross-loaded. This phenomenon is conceptually coherent considering the strong correlation between these factors that, in turn, is possibly due to a latent second-level variable. The internal replications by exploratory and confirmatory factor analyses on randomized split halves provide information speaking in favour of the proposed factor model.

Since the suggested construct holds good or reasonable fit indices and behaves in a consistent manner throughout the different analyses, it can be regarded as holding initial construct validity. ‘Initial’ here means that the present study was only a first attempt to launch the hypothesized dimensions, and further research (with new data and adjusted items) is still required to test the generalizability of the construct (Finch et al., 2016, pp. 127-128). Further testing should also involve a diverse student population with regard to domains and cultural background.

In general, the factors reflect both original and hypothesized dimensions. In all EFA models (Tables 3 and 4) as well as in the CFA path diagram (Figure 2), the highest loading on each factor occurred on one of the anticipated items. Regarding the original dimensions, it turned out that 12 out of the 27 items reflected the anticipated construct and one item loaded differently than in previous studies (see rightmost column in Table 1). Within the hypothesized scales, 13 items were included in the novel scales.

To answer the question whether and to what extent the factors actually describe the dimensions, this section presents comments regarding each dimension in the model (Table 3, Figure 2). The original dimensions retained their original labels, and the labelling of the novel dimensions is commented on in the section Novel dimensions. Choices regarding factor model and number of factors were discussed in the section An extended set of dimensions.

5.2.1 Original dimensions

Learning ability

Three of the four items in the Learning ability dimension proved stable across most analyses and models, whereas the item k12_9 was dropped at an early stage. In some EFA models, this factor attracted items from the Constructivist approach dimension, which is consistent with the strong correlation between these dimensions (Table 3, Figure 2).

Omniscient authority

In her earliest studies, Schommer (1990; 1998) reported this dimension as difficult to capture, whereas Schraw et al. (2002, p. 267) and Moschner et al. (2005) were able to identify this dimension. In this sample, the authority dimension manifested clearly in all models, and the items k09_3F29, k12_5F42 and k14_7 loaded consistently on the Omniscient authority factor.

Structure of knowledge

Throughout the analyses, most of the Structure of knowledge items loaded as expected. Item k12_7 often loaded on the Learning ability factor but for this item, a connection to learning is not far-fetched; combining information across sources may express an active stance towards learning, rather than a view of the Structure of knowledge. Thus, this item may be an example of a phrasing containing something that might be called keyword shifting, where the keyword “combining” is perceived differently: some respondents recognize the active learning approach, whereas others see it as an expression of knowledge as bits and pieces that can be combined or kept isolated.

During the refinement process, item k12_7 as well as several other items were discarded, leaving four items to represent this dimension. Looking at the discarded vs. retained items (Table 1) does, however, raise some questions. It seems unfortunate to discard the items k05_7, k06_8, k07_8 and k12_7, since they indeed express a knowledge aspect, i.e. a very clear stance regarding the Structure of knowledge as isolated facts vs. information that can or should be combined into larger entities. Then again, the retained items seem to focus very much on the actions and behaviour on the part of the teacher, almost like introducing a teaching aspect to epistemic beliefs, besides the knowledge and learning aspects. Interestingly, five out of the six discarded items (k05_7, k06_8, k07_8, k09_7 and k12_7) stem from the original EQ.

Certainty of knowledge

In most models, items k03_8, k04_2F13 and k13_2F44 loaded as expected on the Certainty of knowledge factor, but often accompanied by items k04_4F04 and k05_1F15, originally associated with Omniscient authority.

The items k04_4F04 and k05_1F15 (discarded) may be examples of items with keyword shifting of another kind. Here, the respondent may pay attention either to the “experts/teachers” as authorities, or rather focus on “same answers / same understanding”, the latter option connecting more to knowledge being certain. This observation shows similarity to the EQ subset Avoid ambiguity loading on Simple (Structure of) knowledge instead of Certain knowledge (cf. Schommer, 1990; Wood & Kardash, 2002, p. 241). It should be mentioned that the discarded items k13_2F44 and k14_2F49 were the ones that showed the highest proportions of ‘don't know’ responses.

5.2.2 Novel dimensions

Out of the six hypothesized dimensions, three survived the EFA and CFA iterations. Constructivist approach and Internet reliance were retained and Learning by dialogue was introduced as the third dimension. The crucial question is whether the novel dimensions are defensible. Do they reflect the constructs, and are the constructs relevant and credible?

Constructivist approach to learning

The novel dimension Constructivist approach to learning did not turn out as anticipated; instead, in the proposed model it also holds items from the hypothesized dimensions Valuing diversity and Reflective nature of learning (Table 1). Most of the seven items loading on this factor in the initial model (Table 3) proved stable throughout the different analyses. In the split half EFA, the items k05_2 and k07_2 loaded on Learning ability, which is both conceptually coherent and understandable considering the strong correlation between these dimensions.

To some extent, the Constructivist approach can be regarded as an antithesis to Omniscient authority; a naïve stance on the Omniscient authority dimension would entail a belief that knowledge is handed down by some authority, which implicitly would exclude the possibility of the individual constructing knowledge herself. However, if these dimensions were opposite to each other, they would also correlate negatively, which was not the case.

The explanation may be that the items used to operationalize the Omniscient authority dimension mainly focus on how the respondent relates to authorities and to the knowledge handed down by them. The items do not actually provide information about the extent to which the respondent thinks it is possible to construct knowledge. Thus, the Constructivist approach dimension can rather be regarded as a supplement to the Omniscient authority dimension. Furthermore, whereas the Omniscient authority dimension expresses a knowledge (source) aspect, the Constructivist approach dimension expresses a learning (as construction) aspect.

Learning by dialogue

In the initial EFA (Table 3), this dimension contained items from the hypothesized dimensions Valuing diversity (k03_5, k03_7) and Connectivist approach (k04_3), all with strong loadings. In the EFA on split half A, the item k03_5 caused a Heywood case while the other two items loaded weakly and moreover, k04_3 loaded on the Omniscient authority factor, which is conceptually questionable (Table 4). The CFA path diagram on split half B (Figure 2) shows rather weak loadings on this latent variable, but the correlation with Constructivist approach is strong, which is conceptually coherent. The discarded items, especially k04_5 and k08_5, that were suggested to describe Connectivist approach and Valuing diversity, might still be worth testing after rephrasing.

Despite some instability, probably due to the low number of cases in the split halves, this dimension can still be defended since it expresses an aspect not expressed in the previous dimensions, namely learning as a social process where the interaction with others, also those representing divergent opinions, is central.

Internet reliance

Internet reliance contains three of the five items originally associated with this dimension. Some items associated with Just-in-time learning might have been associated with this dimension but were discarded due to instability.

The items k12_6 and k13_6 proved stable across the analyses, whereas k14_5 loaded weakly and cross-loaded in the initial model and loaded on Certainty of knowledge in the split half EFA. In the split half CFA, k13_6 caused a Heywood case and k14_5 loaded weakly on this dimension. Adding a connection from Structure of knowledge to k14_5 improved fit indices. The corresponding loading also occurred in the initial EFA model (Table 3).
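To illustrate why a Heywood case is treated as a warning sign, the sketch below assumes that the reported value of 1.01 is a standardized loading and that the item loads on a single factor, in which case the implied residual variance is 1 − λ². Both assumptions and the function names are illustrative and are not taken from the Amos output.

```python
def residual_variance(std_loading: float) -> float:
    """Implied residual variance of an item that loads on a single factor
    in a fully standardized solution (assumption: 1 - loading squared)."""
    return 1.0 - std_loading ** 2


def is_heywood(std_loading: float) -> bool:
    """A Heywood case shows up as a negative implied residual variance."""
    return residual_variance(std_loading) < 0.0


print(is_heywood(1.01))  # True: 1 - 1.01**2 is slightly below zero
print(is_heywood(0.80))  # False
```

A loading only marginally above 1 yields a residual variance only marginally below zero, which is why such a case can be described as minor.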

The fact that ISEQ items (Bråten et al., 2005) were not included to a larger extent may be surprising. However, a closer look shows that the three items included in the present instrument resemble the ISEQ General Internet Epistemology items strongly, and basically cover the same topics.

The items in this dimension were presented from a naïve perspective and were slightly skewed to the right, indicating that the respondents were not quite as convinced of internet as the Digital Natives debate may have suggested.

The question whether the dimensions correlate or not has been an issue throughout the years within this line of investigation. In her first studies, Schommer (1990; 1998) used only Varimax rotation and apparently assumed non-correlating dimensions. One might ask whether the idea of a set of “more or less independent dimensions” (Schommer, 1990) has created an expectation of the dimensions being uncorrelated.

Schraw et al. (2002, p. 265) analysed their material using both orthogonal and oblique rotation, but concluded that the factors did not correlate. Still, their Principal Component Analyses with Varimax rotation revealed a weak positive correlation between the Omniscient authority and Simplicity/Structure of knowledge dimensions (p. 269). Then again, Wood & Kardash (2002, p. 252) found moderate to strong inter-factor correlations using factor analysis. Wood & Kardash (2002, p. 239) also advise against limiting exploration to orthogonal rotation methods, since forcing inter-correlated factors into an orthogonal model will cause items to cross-load, and the attempt to find a simple structure will fail. Otting et al. (2010) identified a relation between Expert knowledge (cf. Omniscient authority), Certainty of knowledge and traditional conceptions of teaching and learning. Accordingly, they also identified a relation between Learning effort (cf. Learning ability) and constructivist conceptions of teaching and learning (cf. the Constructivist approach identified in the present study).

Thus, since the first explorations in the present study indicated that at least some factors correlate, it was obvious that oblique rotation methods should be used (cf. Finch et al., 2016, pp. 133, 142; Osborne, 2014, pp. 30-33) to allow the factors to correlate, and as it turned out, they did. Throughout the analyses (Tables 2, 3, 4 and Figure 2), the naïvely oriented original dimensions Omniscient authority, Structure of knowledge and Certainty of knowledge correlated with each other. This is in line with the findings by Bråten et al. who merged these dimensions into a factor labelled General Internet Epistemology, but also raises the question if the General Internet Epistemology factor (Bråten et al., 2005; Knight et al., 2017) actually suggests a second-level latent variable?

Across the novel dimensions, correlations occurred between Learning by dialogue and Constructivist approach, although they were surprisingly weak. Then again, Constructivist approach always correlated strongly with Learning ability, which was also confirmed in CFA (Figure 2). The Internet reliance dimension correlated weakly with Certainty of knowledge and Structure of knowledge (in CFA only with the latter), which may seem surprising but is still coherent when taking a closer look at the single items. Believing that you can get almost all information about a subject by googling one or two internet sources, and that they can provide you with a clearer picture (than books), will probably go hand in hand with a belief in knowledge being certain and structured. The overall weak correlations with Internet reliance may also suggest that this dimension develops “more or less independently” as Schommer (1990) originally suggested.

The strong correlation between Constructivist approach and Learning ability is coherent, since believing in everyone’s ability to learn how to learn is part of the constructivist view where the metacognitive component, the learner’s awareness of her/his own learning, is central. The correlation between Constructivist approach and Learning by dialogue is also coherent. The Constructivist approach regards learning as a process of reasoning and construction, where meaning and interpretation are often negotiated in social settings, in dialogue with other learners, and learning is enriched by multiple views and perspectives. The correlation is lower than anticipated, which may be due to Learning by dialogue being represented by only three items.

To conclude, limiting the EFA to orthogonal rotation methods would have concealed the inter-factor relations reported here and perhaps also forced the items to load on inappropriate factors (cf. Osborne, 2014, pp. 30-33).
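The point about orthogonal rotation can be made concrete with a small numerical sketch. It assumes a simple structure in which each item loads on one factor only, so that the model-implied correlation between two items on different factors is the product of the two loadings and the factor correlation. The loadings of .70 are hypothetical; the factor correlation of .494 is the value reported for Constructivist approach and Learning ability in the split half EFA.

```python
def implied_item_correlation(loading_a: float,
                             loading_b: float,
                             factor_corr: float) -> float:
    """Model-implied correlation between two items that load on
    different factors (simple structure, no cross-loadings)."""
    return loading_a * factor_corr * loading_b


# Hypothetical loadings of .70; factor correlation .494 from the text
oblique = implied_item_correlation(0.70, 0.70, 0.494)
orthogonal = implied_item_correlation(0.70, 0.70, 0.0)

print(round(oblique, 3))  # 0.242
print(orthogonal)         # 0.0
```

With the factor correlation forced to zero, the model implies no correlation between such items, so any correlation actually present in the data can only be absorbed through cross-loadings, which is the distortion the oblique rotation avoids.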

5.4.1 Scale considerations